5 Foundations for inference

Data and data sets are not objective; they are creations of human design. We give numbers their voice, draw inferences from them, and define their meaning through our interpretations. —Katie Crawford

Inferential statistics is the branch of statistics concerned with drawing conclusions about populations or causal relationships from sample data. It provides the tools to answer questions like: did our manipulation actually cause a change, or could the pattern we observed have arisen by random chance alone?

This chapter builds the conceptual foundations for inference using simulation — no formulas yet. The goal is to make the underlying logic explicit and visible before it gets formalized in later chapters.

5.1 Brief review of Experiments

A controlled experiment is a structured method of data collection designed to support inferences about causality. In environmental science, experiments involve deliberately manipulating one condition while holding others constant, then measuring the response.

Every experiment has two core components. The independent variable is the condition under experimental control — the manipulation. The dependent variable is what is measured. For example, a researcher studying the effects of wastewater discharge on stream health might compare dissolved oxygen levels at sites downstream of a discharge point against levels at unaffected reference sites. Distance from discharge (affected vs. reference) is the independent variable; dissolved oxygen is the dependent variable.

Causal forces produce detectable change. If the discharge is genuinely reducing dissolved oxygen, then measurements from affected sites should differ systematically from measurements at reference sites. The experiment is designed to detect that change.

The central question becomes: how do we know if a measured difference between conditions reflects a real causal effect? The short answer is that data always contain variability — measurements fluctuate even when nothing has changed. To decide whether a difference is real, we need tools that can distinguish meaningful change from background noise.

5.2 The data came from a distribution

Understanding where data come from is foundational to inference. This section returns to sampling and distributions from that perspective — not just to describe them, but to ask what they reveal about the process that generated our observations.

A distribution specifies what numbers are likely to occur and how often. It sets the constraints on what we can expect to see when we take measurements. Distributions are abstract, but they can be visualized directly with histograms.

5.2.1 Uniform distribution

As a reminder from the last chapter, Figure 5.1 shows that the shape of a uniform distribution is completely flat.

The y-axis shows probability (0 to 1) and the x-axis shows the values 1 through 10. The flat horizontal line indicates that every value from 1 to 10 has an equal probability of occurring: 1/10 = 0.1. This is the defining property of a uniform distribution — no value is more likely than any other.

Think of the uniform distribution as a number-generating process. If it generates numbers repeatedly, it produces each value with equal frequency: roughly 10% 1s, 10% 2s, and so on. Table 5.1 shows 100 numbers sampled from this distribution.

|  |  |  |  |  |  |  |  |  |  |
|---|---|---|---|---|---|---|---|---|---|
| 8 | 8 | 6 | 2 | 10 | 8 | 8 | 6 | 10 | 4 |
| 5 | 2 | 9 | 6 | 4 | 8 | 5 | 9 | 1 | 7 |
| 9 | 2 | 8 | 4 | 5 | 10 | 4 | 2 | 9 | 8 |
| 2 | 4 | 9 | 1 | 6 | 5 | 2 | 2 | 2 | 5 |
| 9 | 3 | 8 | 5 | 6 | 8 | 9 | 10 | 8 | 4 |
| 10 | 4 | 5 | 9 | 10 | 7 | 6 | 10 | 6 | 9 |
| 10 | 9 | 5 | 7 | 4 | 1 | 4 | 5 | 9 | 1 |
| 8 | 1 | 2 | 5 | 6 | 9 | 9 | 7 | 1 | 6 |
| 7 | 9 | 4 | 6 | 7 | 2 | 3 | 8 | 2 | 9 |
| 3 | 9 | 7 | 6 | 10 | 9 | 5 | 9 | 10 | 4 |

We used the uniform distribution to generate these numbers. Formally, this is called sampling from a distribution. Drawing a subset of observations from a larger process or population is sampling; if you could observe every possible value the process could produce, you would have the population. We will return to the distinction between samples and populations throughout the course.
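Sampling from a distribution can be sketched in a few lines of R. This is a minimal illustration rather than the code used to produce Table 5.1; the seed and the variable name `uniform_sample` are assumptions, so the numbers it draws will differ from the table.

```r
# Draw 100 values from a discrete uniform distribution on 1..10.
# The seed is arbitrary (an assumption); it only makes the example reproducible.
set.seed(1)
uniform_sample <- sample(1:10, size = 100, replace = TRUE)

# Show the first ten sampled values
head(uniform_sample, 10)
```

Setting `replace = TRUE` matters here: it lets every draw pick any of the ten values, so each draw has the same 1-in-10 chance for every number.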

Because we used the uniform distribution to create these numbers, we already know where they came from. But pretend for a moment that someone showed up at your door with these numbers and you wondered where they came from. Can you tell just by looking at them that they came from a uniform distribution? What would you need to look at? Perhaps you would want to know whether all of the numbers occur with roughly equal frequency. After all, they should: if each number had the same chance of occurring, each number should occur roughly the same number of times.

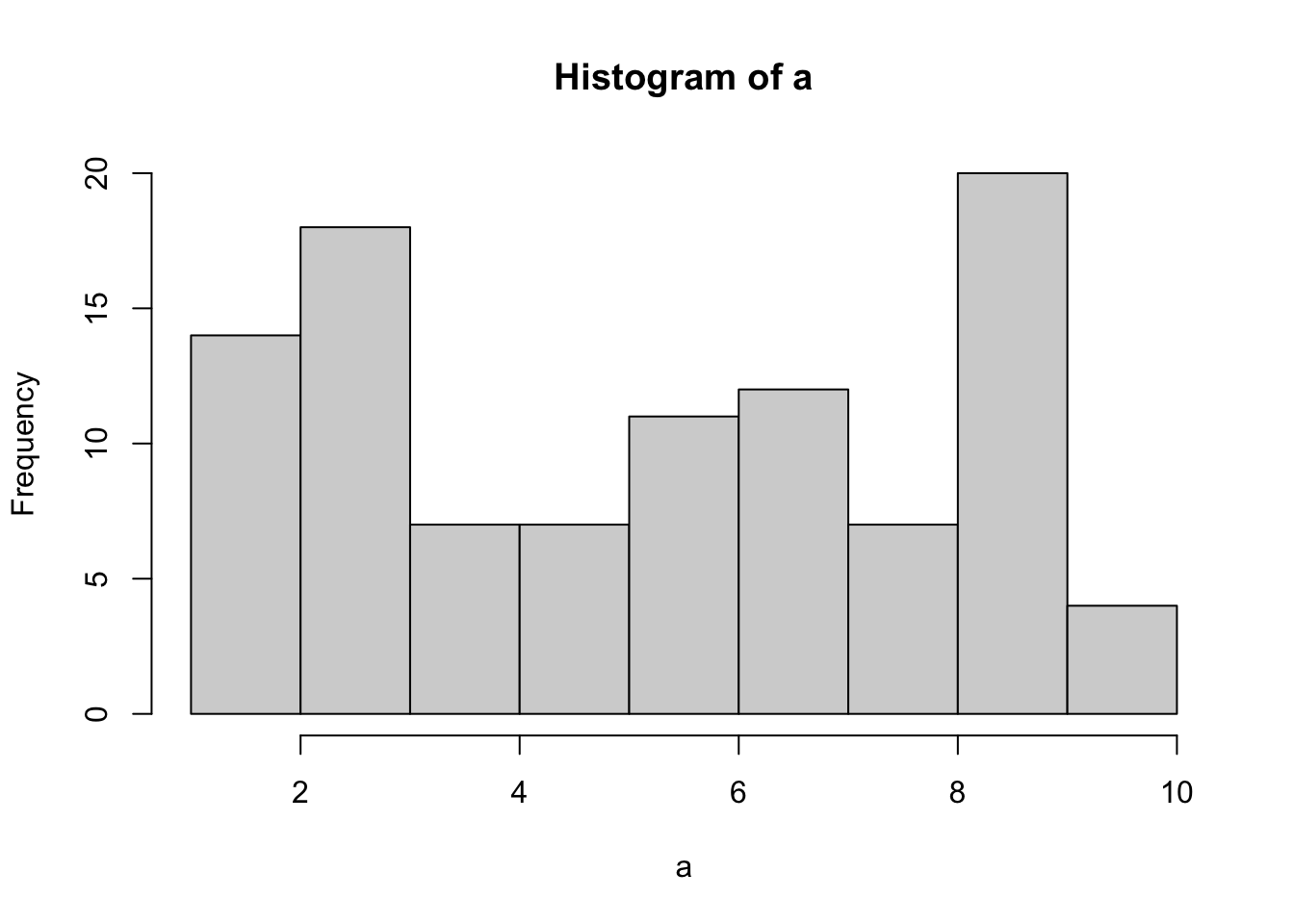

We already know what a histogram is, so we can put our sample of 100 numbers into a histogram and see what the counts look like. If all of the numbers from 1 to 10 occur with equal frequency, then each individual number should occur about 10 times. Figure 5.2 shows the histogram:

As the histogram shows, not all numbers occurred exactly 10 times. The bars vary in height even though all values had equal probability. This is a direct illustration of sampling variability: random samples do not perfectly reproduce the distribution they came from. The discrepancy between what we observe and what the distribution predicts is called sampling error.
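The gap between observed and expected counts can be computed directly. The sketch below draws a fresh sample (not the one in Table 5.1, so the counts will differ) and subtracts the expected count of 10 from each observed count; the names `observed`, `expected`, and `sampling_error` are chosen here for illustration.

```r
# A fresh sample of 100 values from the uniform distribution on 1..10
set.seed(2)  # arbitrary seed, for reproducibility only
x <- sample(1:10, size = 100, replace = TRUE)

# Count how often each value occurred; factor() keeps values that never occurred
observed <- table(factor(x, levels = 1:10))

# Under a uniform distribution each value is expected 100 / 10 = 10 times
expected <- length(x) / 10

# Sampling error per value: observed count minus expected count
sampling_error <- as.vector(observed) - expected

print(observed)
print(sampling_error)
```

The errors always sum to zero, because the counts must add up to the sample size: any value that occurs too often is balanced by others that occur too rarely.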

5.2.2 Not all samples are the same, they are usually quite different

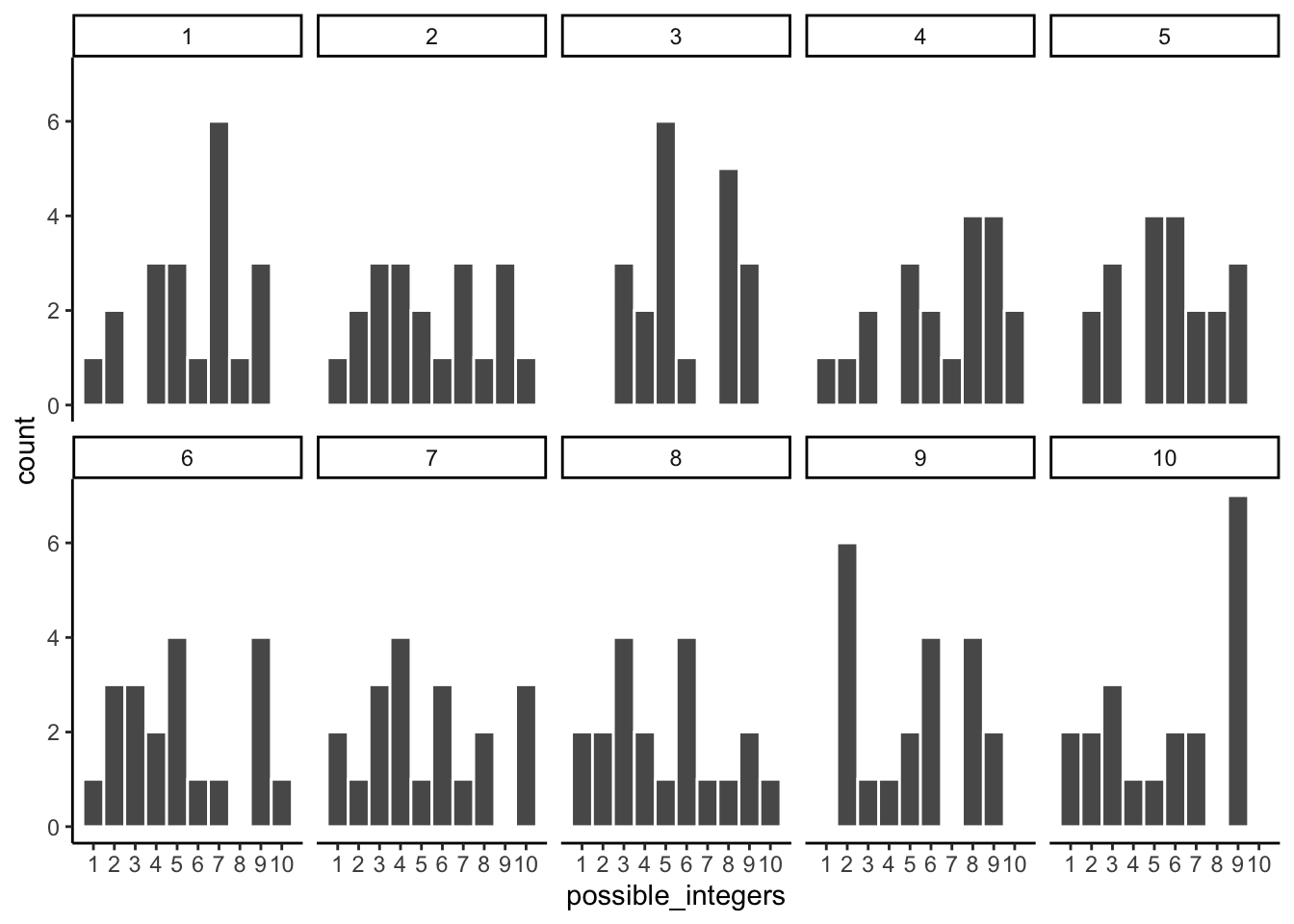

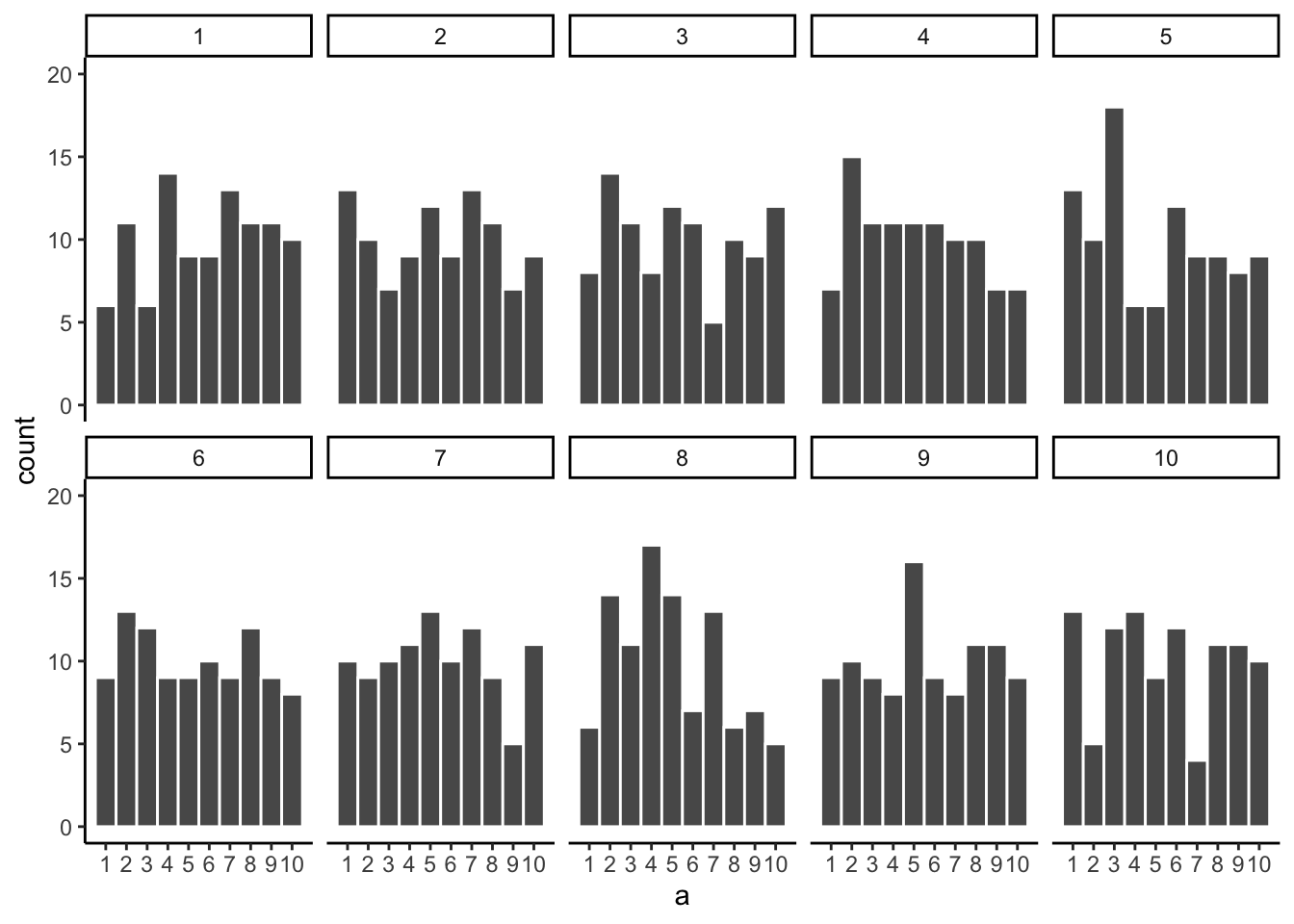

Let’s look at sampling error more closely. We will sample 20 numbers from the uniform distribution. We should expect each number between 1 and 10 to occur about two times. As before, this expectation can be visualized in a histogram. To get a better sense of sampling error, let’s repeat the process ten times. Figure 5.3 has 10 histograms, one for each of 10 different samples of 20 numbers:

You might notice right away that none of the histograms are the same. Even though we are randomly taking 20 numbers from the very same uniform distribution, each sample of 20 numbers comes out different. This is sampling variability, or sampling error.

Figure 5.4 shows an animated version of the process of repeatedly choosing 20 new random numbers and plotting a histogram. The horizontal line shows the flat-line shape of the uniform distribution. The line crosses the y-axis at 2 because we expect each number (from 1 to 10) to occur about 2 times in a sample of 20. However, each sample bounces around quite a bit due to random chance.

Small samples show high variability because random chance can produce very uneven counts even when every value is equally likely. This variability is sampling error, and it makes it harder to identify the true distribution from a single sample.
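Repeated small samples can be tabulated side by side to see this variability in numbers rather than bars. The following is a sketch under assumed names (`counts`, `sample1` to `sample10`) and an arbitrary seed; it is not the code behind Figures 5.3 or 5.4.

```r
set.seed(3)  # arbitrary seed, for reproducibility only

# Each column of `counts` holds the counts of the values 1..10
# in one random sample of 20 numbers
counts <- replicate(10, {
  s <- sample(1:10, size = 20, replace = TRUE)
  as.vector(table(factor(s, levels = 1:10)))
})
rownames(counts) <- 1:10
colnames(counts) <- paste0("sample", 1:10)

counts
```

Every column sums to 20, and the expected count in every cell is 2, yet the columns differ from one another. That column-to-column difference is the sampling variability described above.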

5.2.3 Large samples are more like the distribution they came from

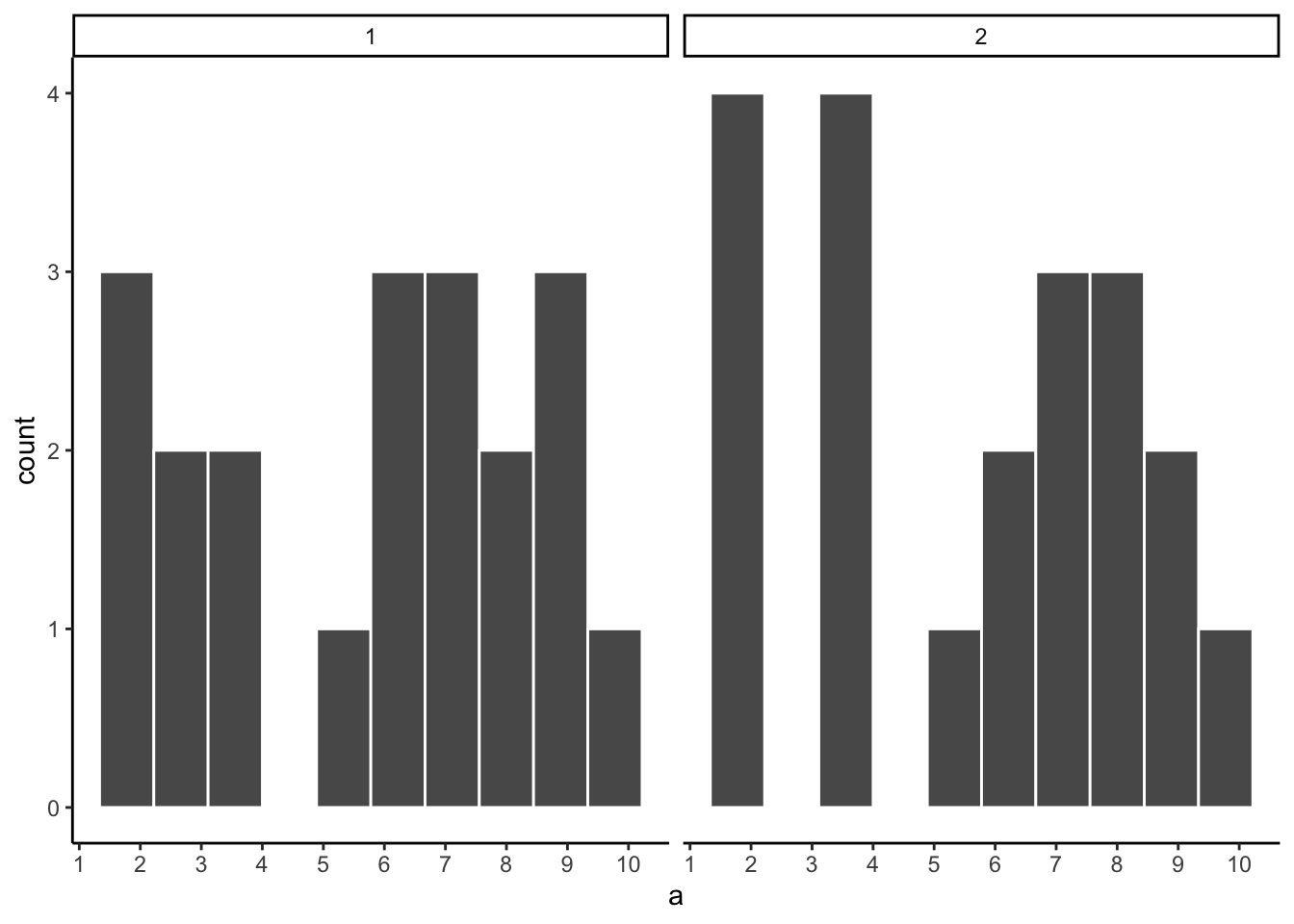

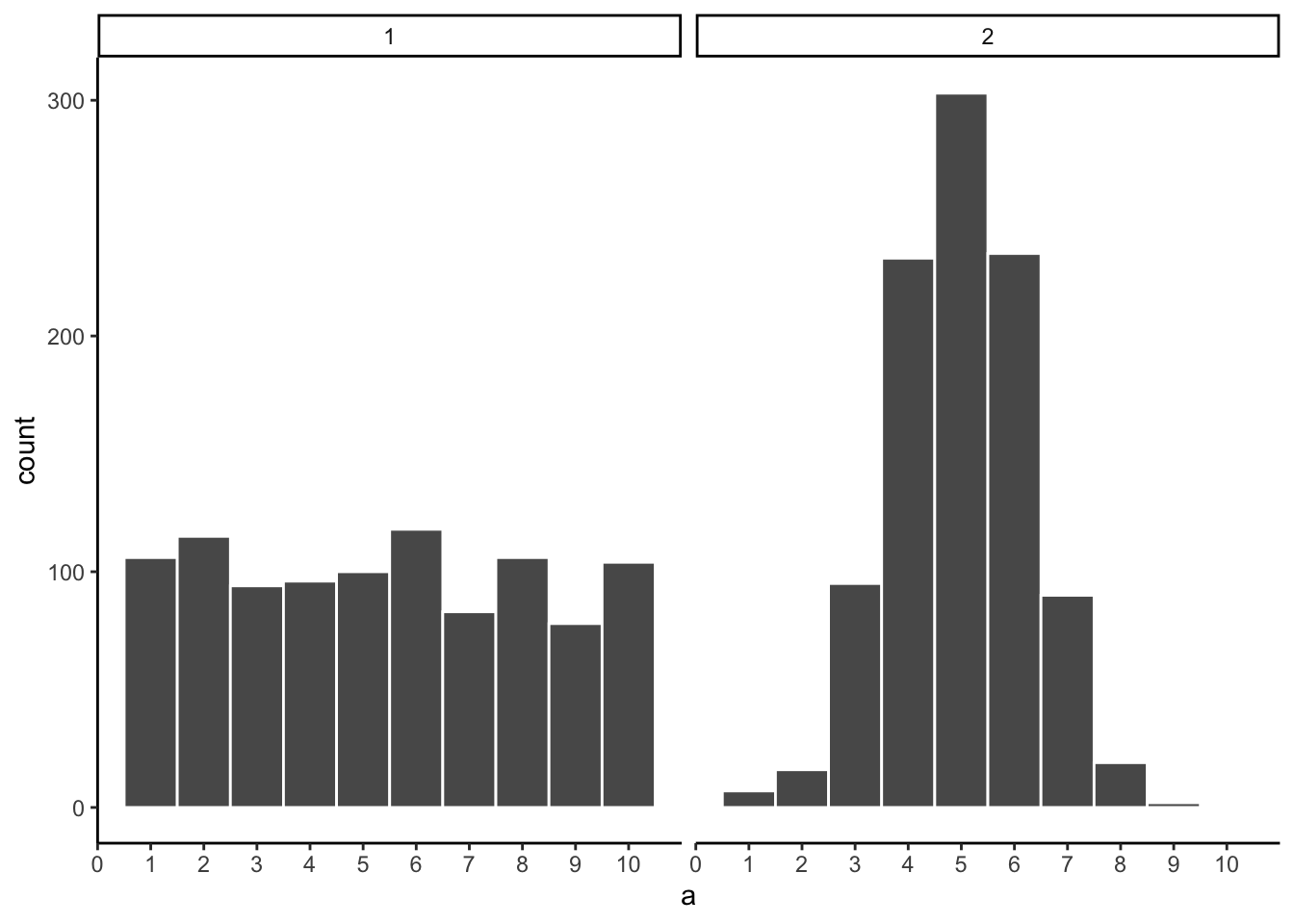

Let’s refresh the question. Which of the two samples in Figure 5.5 do you think came from a uniform distribution?

Both samples came from the uniform distribution — yet neither histogram looks perfectly flat. This illustrates how sampling error can obscure the true distribution, especially at small sample sizes.

Can we improve things, and make it easier to see whether a sample came from a uniform distribution? Yes, we can. All we need to do is increase the sample size. We will often use the letter N to refer to sample size: N is the number of observations in the sample.

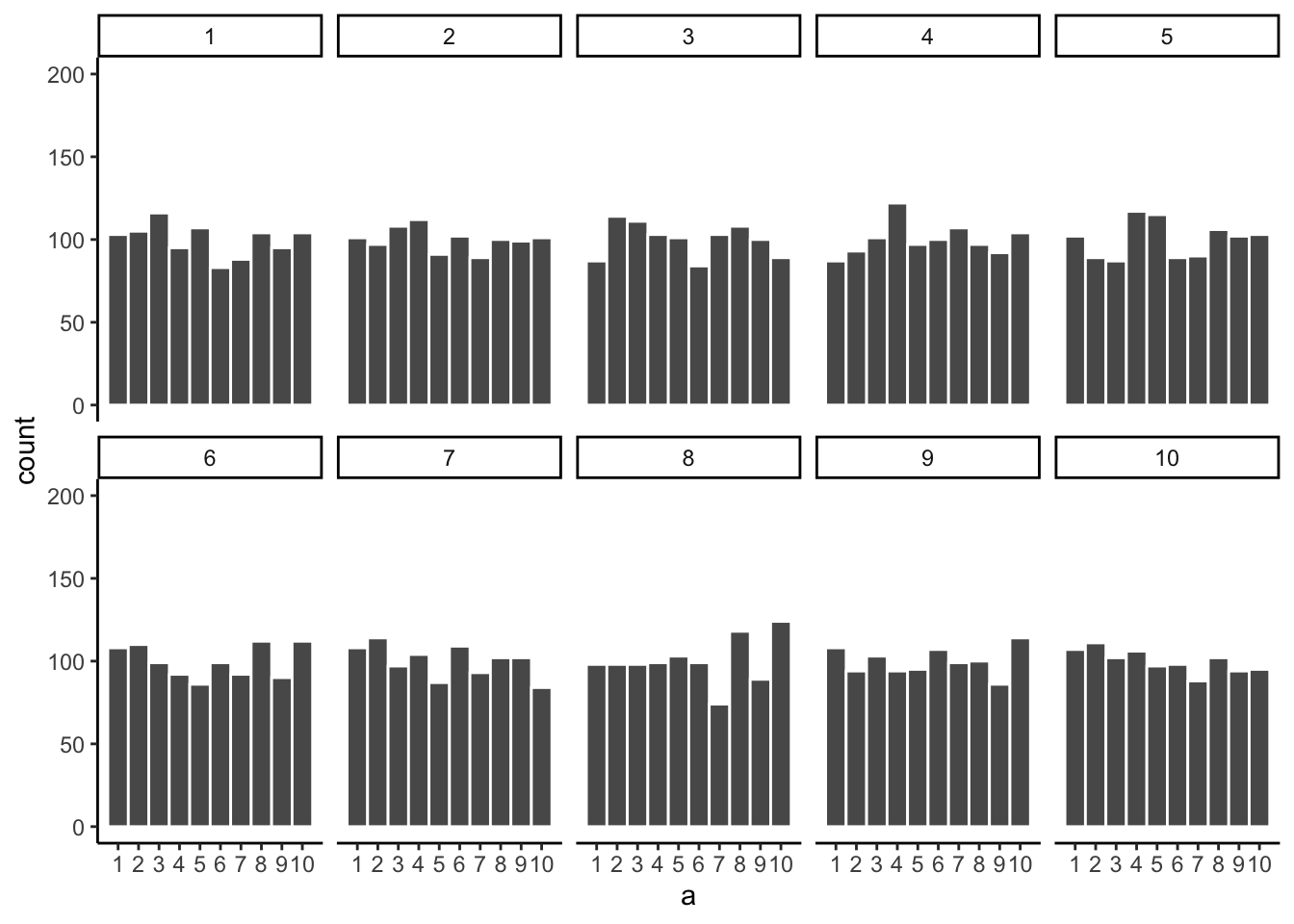

So let’s increase the number of observations in each sample from 20 to 100. We will again create 10 samples (each with 100 observations), and make histograms for each of them. All of these samples will be drawn from the very same uniform distribution. This means we should expect each number from 1 to 10 to occur about 10 times in each sample. The histograms are shown in Figure 5.6.

Again, most of these histograms don’t look very flat, and all of the bars seem to be going up or down, and they are not exactly at 10 each. So, we are still dealing with sampling error. It’s a pain. It’s always there.

Let’s bump up the \(N\) from 100 to 1000 observations per sample. Now we should expect every number to appear about 100 times each. What happens?

Figure 5.7 shows the histograms are starting to flatten out. The bars are still not perfectly at 100, because there is still sampling error (there always will be). But, if you found a histogram that looked flat and knew that the sample contained many observations, you might be more confident that those numbers came from a uniform distribution.

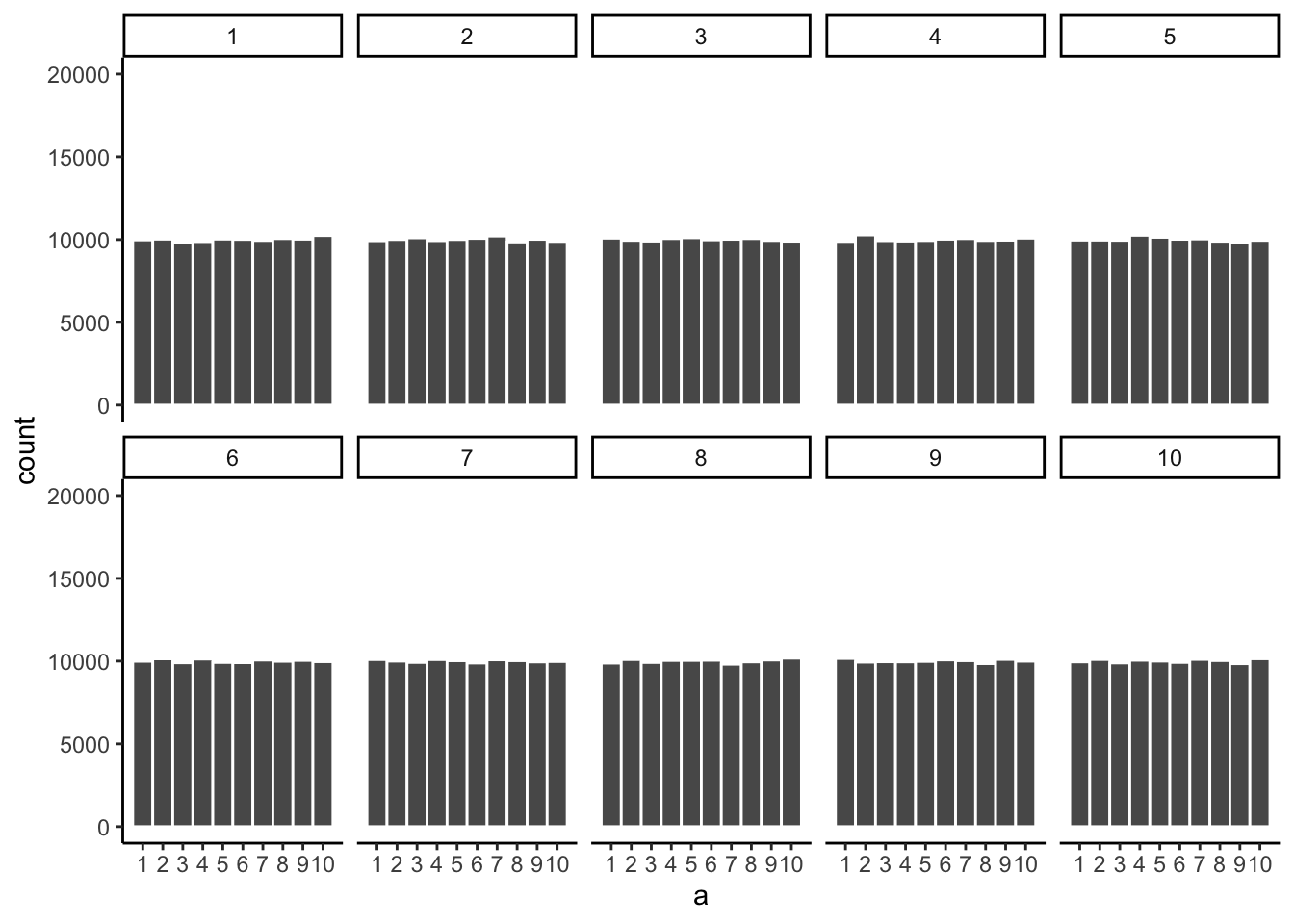

Just for fun let’s make the samples really big. Say 100,000 observations per sample. Here, we should expect that each number occurs about 10,000 times each. What happens?

Figure 5.8 shows that the histograms for each sample are starting to look the same. They all have 100,000 observations, and this gives chance enough opportunity to distribute the numbers evenly, so that they all occur very close to the same number of times. As you can see, the bars are all very close to 10,000, which is where they should be if the sample came from a uniform distribution.

The pattern behind a sample will tend to stabilize as sample-size increases. Small samples will have all sorts of patterns because of sampling error (chance).
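One way to put a number on this stabilization is to measure how far the observed proportions stray from the expected proportion of 0.1 at each sample size. This is a sketch only; the summary used here (the largest absolute gap, stored in `max_gap`) is a choice made for illustration, not a measure defined in the text.

```r
set.seed(4)  # arbitrary seed, for reproducibility only
sample_sizes <- c(20, 100, 1000, 100000)

# For each sample size, find the largest gap between an observed
# proportion and the expected proportion of 0.1
max_gap <- sapply(sample_sizes, function(n) {
  s <- sample(1:10, size = n, replace = TRUE)
  props <- as.vector(table(factor(s, levels = 1:10))) / n
  max(abs(props - 0.1))
})

data.frame(n = sample_sizes, max_gap = max_gap)
```

The gap should generally shrink as N grows, which is the numerical counterpart of the histograms flattening out.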

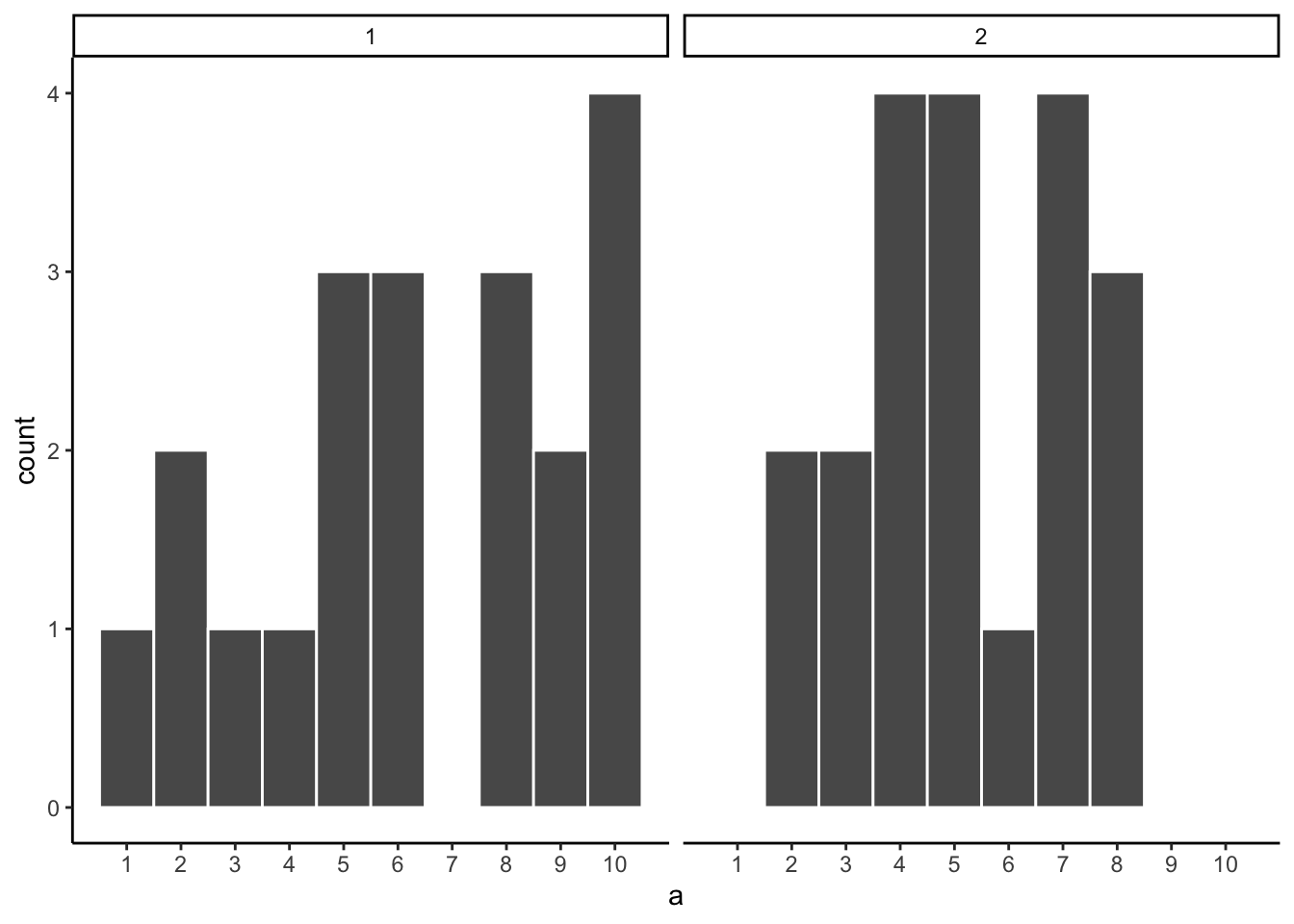

Before getting back to the topic of experiments that we started with, let’s ask two more questions. First, which of the two samples in Figure 5.9 do you think came from a uniform distribution? FYI, each of these samples had 20 observations.

If you are not confident in the answer, this is because sampling error (randomness) is fuzzing with the histograms.

Here is the very same question, only this time we will take 1,000 observations for each sample. Which histogram in Figure 5.10 do you think came from a uniform distribution, and which one did not?

Now that we have increased N, we can see the pattern in each sample becomes more obvious. The histogram for sample 1 has bars near 100, not perfectly flat, but it resembles a uniform distribution. The histogram for sample 2 is not flat looking at all.

By comparing the two histograms, we have already performed a basic statistical inference — we inferred that sample 2 did not come from a uniform distribution. This is the same logic underlying the formal tests covered in later chapters: arrange the data to make the source distribution visible, then judge whether the observed pattern is consistent with a hypothesized one.

5.3 Is there a difference?

Let’s get back to experiments. In an experiment we want to know if an independent variable (our manipulation) causes a change in a dependent variable (measurement). If this occurs, then we will expect to see some differences in our measurement as a function of the manipulation.

Consider the light switch example:

Light Switch Experiment: You manipulate the switch up (condition 1 of independent variable), light goes on (measurement). You manipulate the switch down (condition 2 of independent variable), light goes off (another measurement). The measurement (light) changes (goes off and on) as a function of the manipulation (moving switch up or down).

You can see the change in measurement between the conditions; it is as obvious as night and day. So, when you conduct a manipulation, and can see the difference (change) in your measure, you can be pretty confident that your manipulation is causing the change.

Note: to be cautious, we can say that “something” about your manipulation is causing the change. It might not be what you think it is if your manipulation is very complicated and involves lots of moving parts.

5.3.1 Chance can produce differences

Random chance can produce apparent differences between groups even when no real difference exists. We have already seen this with sampling: two samples from the same distribution come out differently. The same principle applies when we compare group means. This is a fundamental problem for inference.

To make this concrete, consider a scenario where we expect to find no real difference. A researcher samples pH at 10 randomly selected sites from a stream network. All 10 sites are drawn from the same population — there is no treatment, no environmental gradient, no systematic difference between them. The sites are arbitrarily divided into two groups of 5 (Group A and Group B). Even though there is no real difference, we might still observe a difference in mean pH between the two groups simply because of sampling error.

Here is data from one run of this scenario:

| group | ph |
|---|---|
| A | 7.21 |
| A | 5.85 |
| A | 7.88 |
| A | 7.01 |
| A | 7.25 |
| B | 6.78 |
| B | 7.85 |
| B | 6.64 |
| B | 6.88 |
| B | 7.48 |

This is a long-format table. Each row is one site. The first column shows the group assignment, and the second shows the pH reading. Did Group A have a higher mean pH than Group B?
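The group means can be checked directly from the ten readings in the table. The sketch below enters the data by hand; the object names (`ph_data`, `mean_A`, `mean_B`, `mean_difference`) are chosen here for illustration.

```r
# The ten pH readings from the table, in long format
ph_data <- data.frame(
  group = rep(c("A", "B"), each = 5),
  ph = c(7.21, 5.85, 7.88, 7.01, 7.25,   # Group A
         6.78, 7.85, 6.64, 6.88, 7.48)   # Group B
)

# Mean pH for each group
mean_A <- mean(ph_data$ph[ph_data$group == "A"])
mean_B <- mean(ph_data$ph[ph_data$group == "B"])

# Difference score: Group A minus Group B
mean_difference <- mean_A - mean_B

mean_A
mean_B
mean_difference
```

For these readings the mean is 7.04 for Group A and 7.126 for Group B, so the difference (A minus B) is -0.086 pH units.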

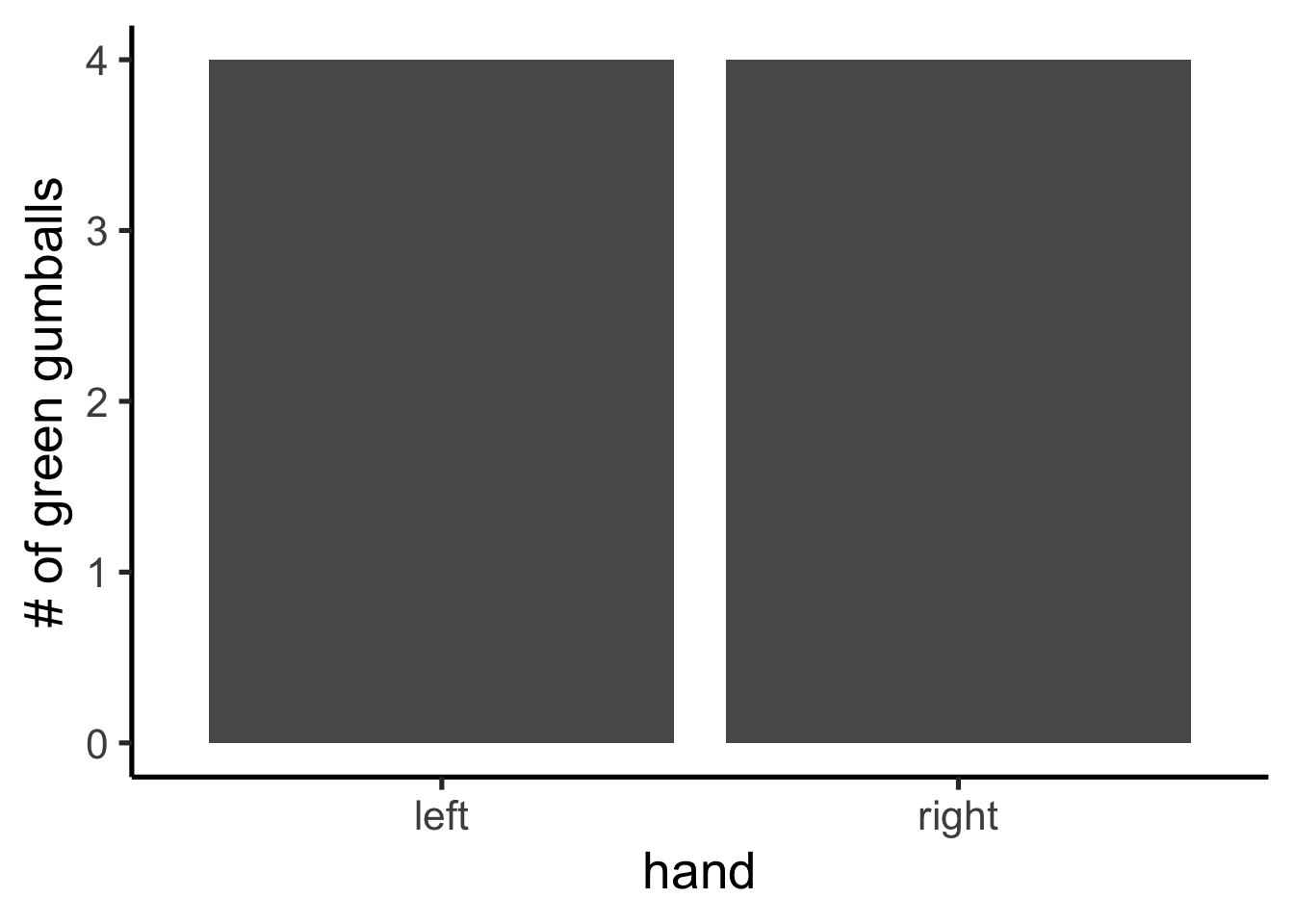

It is easier to see the comparison in a bar graph (Figure 5.11), which shows the mean pH for each group.

The bars are not the same height. Group A and Group B have different mean pH values. Does this mean there is a real environmental difference between the groups? No — both groups were drawn from the same population. The difference is an artifact of sampling error. This is the core inference problem: differences appear even when nothing real caused them. How can we tell whether an observed difference is real or just the result of chance?

5.3.2 Differences due to chance can be simulated

Just as we showed that chance can produce spurious correlations with limited reach, we can characterize exactly what chance is capable of producing in a comparison of group means. Once we know what chance typically does — and what it cannot do — we have a basis for judging whether our observed difference falls within or outside its range.

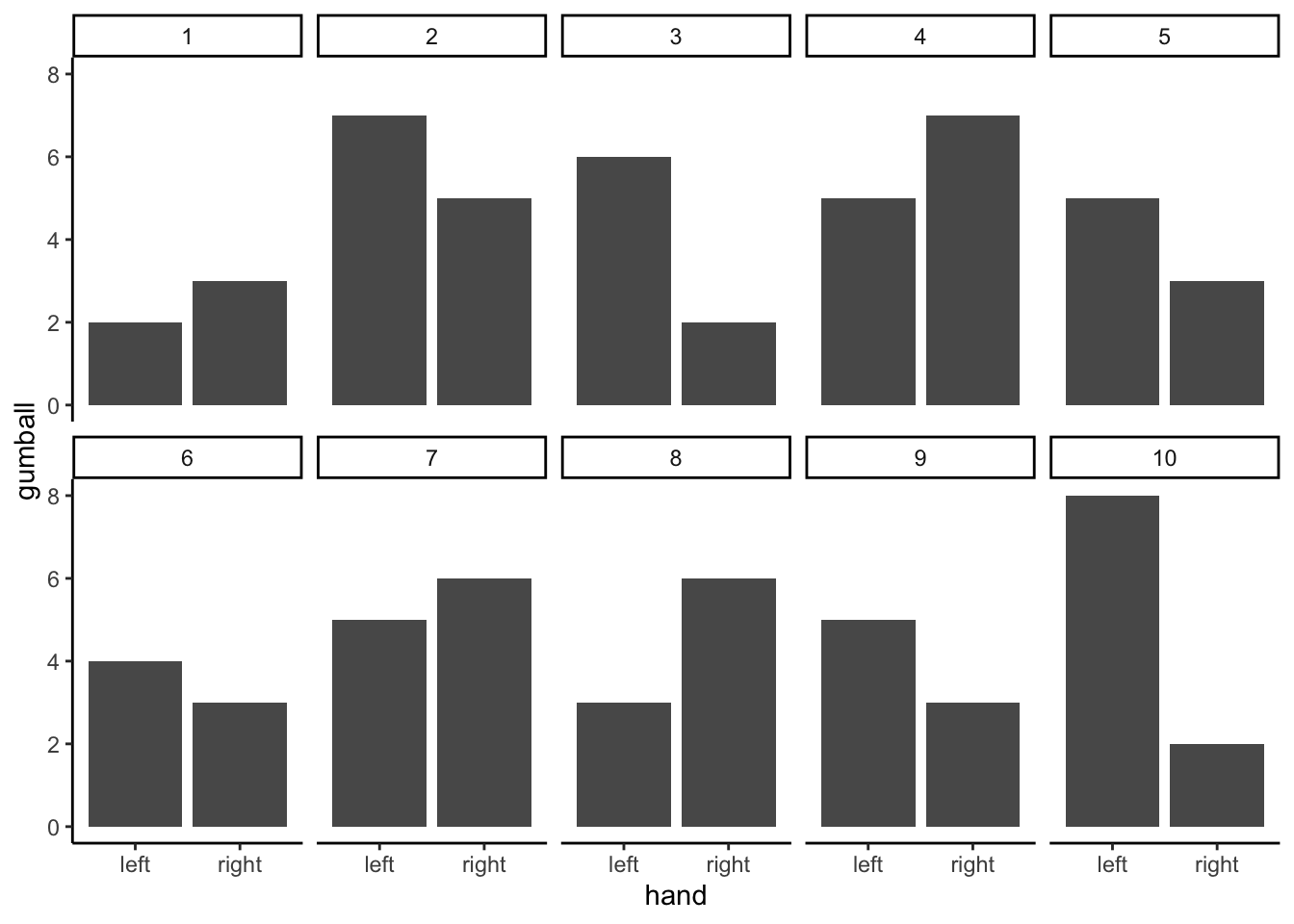

The first step is to replicate the sampling scenario many times. Figure 5.12 shows bar graphs of mean pH for Group A and Group B across 10 replications of the same experiment — all 10 sites sampled from the same population, split 5+5, and means computed.

These 10 replications show that chance produces different mean differences each time. Sometimes Group A is higher, sometimes Group B is higher, sometimes the means are nearly equal. All of this variation arises from sampling error alone — there is no real difference in the population.
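A replication of this kind can be sketched in R. The text does not state the population that the pH values were drawn from, so the normal distribution with mean 7.0 and standard deviation 0.5 used below is purely an assumption for illustration, as are the names `one_replication` and `group_means`.

```r
set.seed(5)  # arbitrary seed, for reproducibility only

# Hypothetical population: these two values are assumptions, not from the text
pop_mean <- 7.0
pop_sd <- 0.5

# One replication: sample 10 sites from the same population,
# split them 5 + 5, and compute the mean of each group
one_replication <- function() {
  ph <- rnorm(10, mean = pop_mean, sd = pop_sd)
  c(mean_A = mean(ph[1:5]), mean_B = mean(ph[6:10]))
}

# replicate() returns a 2 x 10 matrix; t() puts one replication per row
group_means <- t(replicate(10, one_replication()))
group_means
```

Because both groups come from the same call to `rnorm()`, any gap between `mean_A` and `mean_B` in a row can only reflect sampling error.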

5.4 Chance makes some differences more likely than others

We have seen that chance can produce group mean differences. But we still need to characterize what chance usually does and what it rarely does. If we can establish the range of differences that chance produces, we can build a window: differences inside the window could plausibly have arisen by chance; differences outside the window are very unlikely to have.

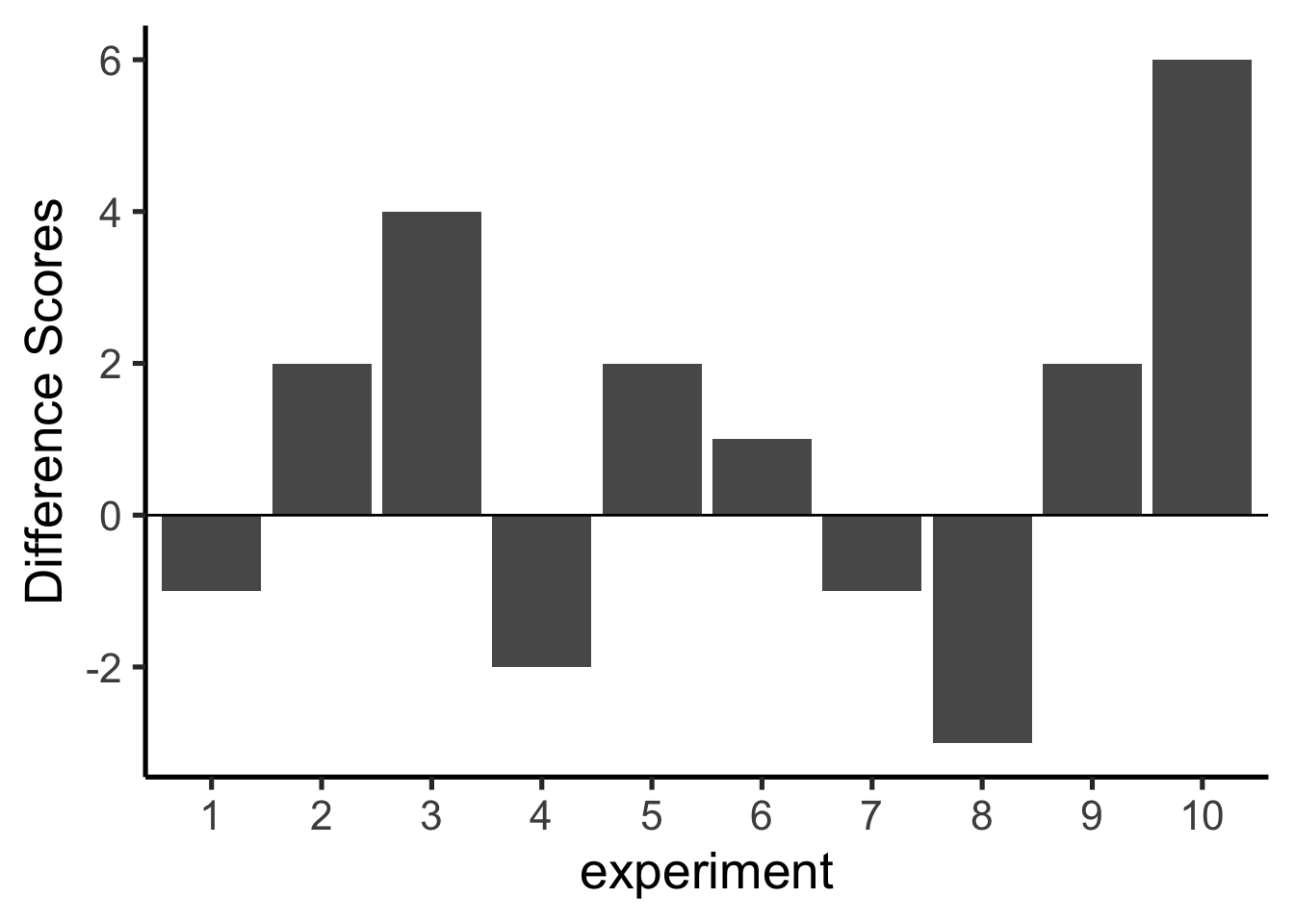

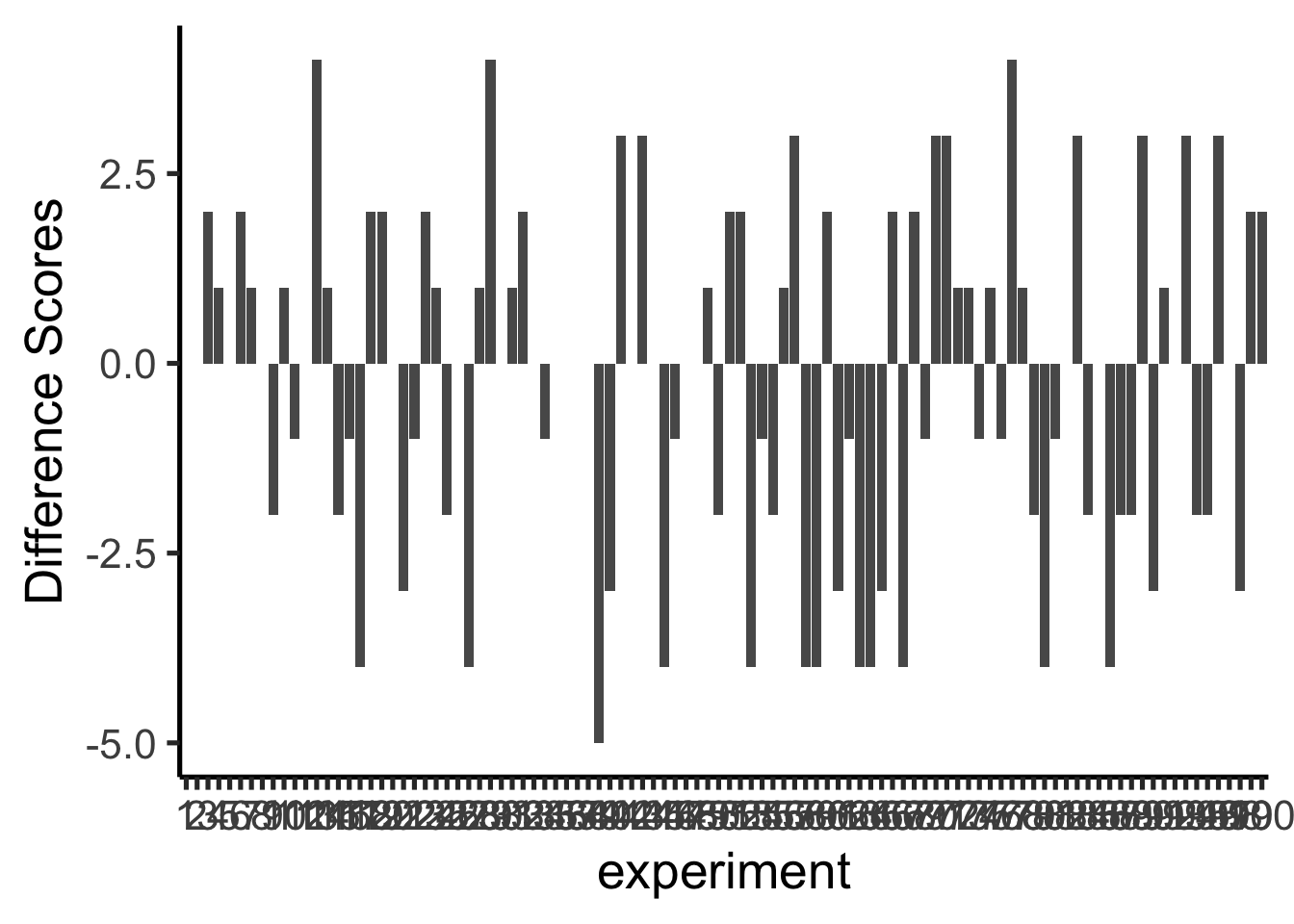

We summarize each replication as a single difference score: mean pH for Group A minus mean pH for Group B. Figure 5.13 shows those difference scores for the 10 replications above.

A bar at zero means the two groups had the same mean pH in that replication. Positive values indicate Group A was higher; negative values indicate Group B was higher. The signs of the differences would flip if we reversed the subtraction order.

The differences in these 10 replications mostly fall in a narrow range. To better characterize what chance can do, we run 100 replications. The results are shown in Figure 5.14.

The x-axis spans 100 experiments and is difficult to read, but the important information is on the y-axis. Many different difference sizes occur, but the range is bounded — very large differences (say, greater than 0.5 pH units in either direction) do not appear. This begins to define the window of what chance can produce.

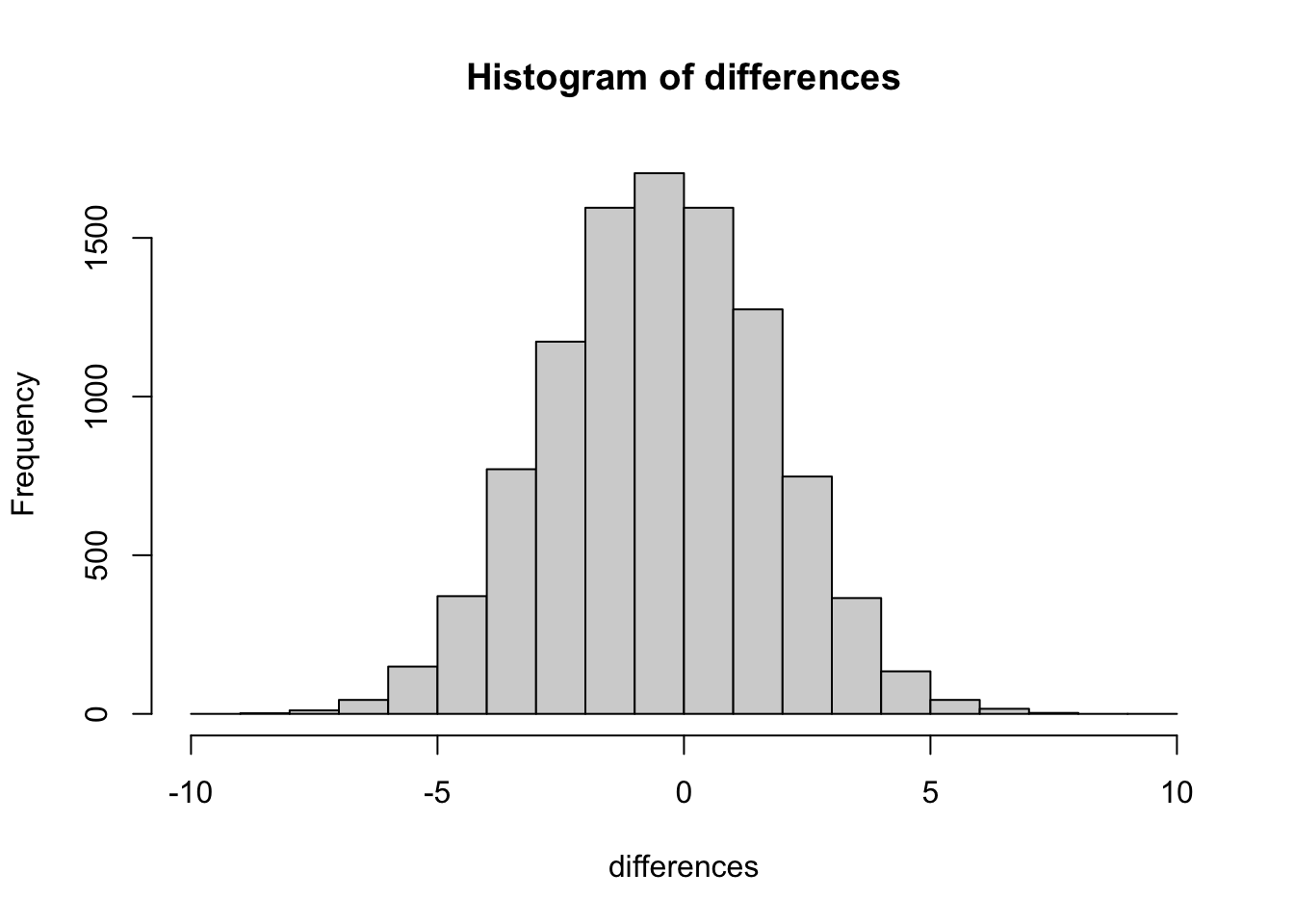

To get a clearer picture, we now run 10,000 replications and plot the distribution of difference scores as a histogram. This gives a precise view of which differences happen often and which are extremely rare.

This histogram is the chance window for this study design. It shows that chance most often produces a difference near zero: that is the tallest bar in the center. Larger differences in either direction occur less and less frequently. The window has no hard edge, but its tails thin out quickly: extreme differences are possible but very rare.

We can use this window to evaluate any observed difference. If a study found a mean pH difference of 0.1 between two groups, that falls well inside the chance window — differences of that size occur frequently by random sampling alone. If the study found a difference of 0.6, that would fall far outside the window; chance produced a difference that large 0 times out of 10,000. When an observed result almost never happens by chance, we have grounds to conclude that something other than chance produced it.
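Evaluating an observed difference against the chance window amounts to counting. The sketch below assumes a hypothetical population (normal, mean 7.0, standard deviation 0.5), because the text does not give one, and the helper `chance_proportion` is a name invented here. How often a given difference occurs depends strongly on the assumed spread, so these proportions are not expected to reproduce the counts quoted above.

```r
set.seed(6)  # arbitrary seed, for reproducibility only

# Hypothetical population: assumptions for illustration only
pop_mean <- 7.0
pop_sd <- 0.5

# 10,000 chance-only difference scores (mean of A minus mean of B)
diffs <- replicate(10000, {
  ph <- rnorm(10, mean = pop_mean, sd = pop_sd)
  mean(ph[1:5]) - mean(ph[6:10])
})

# Proportion of simulated differences at least as large, in absolute
# size, as an observed difference
chance_proportion <- function(observed, simulated) {
  mean(abs(simulated) >= abs(observed))
}

chance_proportion(0.1, diffs)
chance_proportion(0.6, diffs)
```

A large proportion means the observed difference sits well inside the window; a proportion at or near zero means chance almost never produced anything that big.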

5.5 Simulation-Based Inference

We are going to be doing a lot of inference throughout the rest of this course. Pretty much all of it will come down to one question. Did chance produce the differences in my data? We will be talking about experiments mostly, and in experiments we want to know if our manipulation caused a difference in our measurement. But, we measure things that have natural variability, so every time we measure things we will always find a difference. We want to know if the difference we found (between our experimental conditions) could have been produced by chance. If chance is a very unlikely explanation of our observed difference, we will make the inference that chance did not produce the difference, and that something about our experimental manipulation did produce the difference. This is it (for this textbook).

Statistics is not only about determining whether chance could have produced a pattern in the observed data. The same tools we are talking about here can be generalized to ask whether any kind of distribution could have produced the differences. This allows comparisons between different models of the data, to see which one was the most likely, rather than just rejecting the unlikely ones (e.g., chance). But, we’ll leave those advanced topics for another textbook.

The simulation-based approach introduced here answers the same question as every formal test in later chapters: could chance alone have produced the observed difference? The advantage of starting here is transparency — every step is explicit and the logic is visible before any formulas are introduced.

5.5.1 Intuitive methods

The approach in this section uses only simple arithmetic operations that you already understand to build a tool for inference. Specifically, we will use:

- Sampling numbers randomly from a distribution

- Adding and subtracting

- Division, to find the mean

- Counting

- Graphing and drawing lines

- NO FORMULAS

5.5.2 Part 1: Frequency based intuition about occurrence

Question: How many times does something need to happen for it to happen a lot? Or, how many times does something need to happen for it to happen not very much, or even really not at all? How rare does it have to be for you to not worry about it happening to you?

Would you go outside every day if you thought that you would be hit by lightning 1 out of 10 times? At those odds, a person going out daily would expect to be struck about 3 times per month, or more than 30 times per year. 1 in 10 is a high-risk threshold by any practical standard.

Would you go outside every day if you thought lightning would strike 1 out of every 100 days? At that rate, someone going out every day would expect roughly 3–4 strikes per year. Most people would sharply limit their outdoor exposure at 1-in-100 odds.

Would you go outside every day at 1-in-1000 odds? At that frequency, a person going outside daily would expect to be struck roughly once every 3 years. Risk accumulates meaningfully even at odds that initially seem small.

What about 1 in 10,000 days? That translates to roughly one strike every 27 years. At these odds risk accumulates slowly: a person would expect to be struck roughly 36 times per year at 1-in-10 odds, but only two or three times across a lifetime at 1-in-10,000 odds. The practical consequences of different probability thresholds are very different.

At 1 in 100,000 days (about one strike every 274 years), most people would not modify their behavior at all. The probability is so low as to be negligible for practical planning.
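The expected frequencies behind these questions come from simple division. The sketch below assumes someone goes outside on all 365 days of the year; the column names are chosen here for illustration.

```r
# Odds of a strike on any given day: 1 in 10, 1 in 100, and so on
odds <- c(10, 100, 1000, 10000, 100000)

# Expected strikes per year, assuming 365 days outside per year
strikes_per_year <- 365 / odds

# Expected number of years between strikes
years_between_strikes <- odds / 365

data.frame(
  one_in = odds,
  strikes_per_year = round(strikes_per_year, 3),
  years_between_strikes = round(years_between_strikes, 1)
)
```

At 1-in-10 odds this gives 36.5 expected strikes per year; at 1-in-100,000 odds it gives roughly one strike every 274 years.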

The point of considering these questions is to get a sense for yourself of what happens a lot, and what doesn’t happen a lot, and how you would make important decisions based on what happens a lot and what doesn’t.

5.5.3 Part 2: Simulating chance

This next part could happen a bunch of ways, so I’ll make some simplifying assumptions to keep things clear. We’ve already been introduced to simulating things, so we’ll do that again. Here is what we will do. Say we are an environmental scientist monitoring total suspended particulate concentrations (µg/m³) at air quality stations across a region. From prior monitoring work, we know that when we sample particulate levels across similar sites, our measurements tend to have a particular mean and standard deviation. Let’s say the mean is usually 100 µg/m³, and the standard deviation is usually 15 µg/m³. In this case, I don’t care about using these numbers as estimates of the population parameters, I’m just thinking about what my samples usually look like. What I want to know is how they behave when I sample them. I want to see what kind of samples happen a lot, and what kind of samples don’t happen a lot. Now, when we run studies to see whether something like a pollution source or land-use type changes particulate levels, we usually have a limited number of monitoring sites available. Let’s say we can instrument 20 sites in each of two treatment areas. Let’s keep the study simple, with two groups, so we will need 40 total sites.

I would like to learn something to help me with inference. One thing I would like to learn is what the sampling distribution of the sample mean looks like. This distribution tells me what kinds of mean values happen a lot, and what kinds don’t happen very often. But, I’m actually going to skip that bit. Because what I’m really interested in is what the sampling distribution of the difference between my sample means looks like. After all, I am going to run a study with 20 monitoring sites in one group and 20 in another. Then I am going to calculate the mean particulate concentration for group A and the mean for group B, and I’m going to look at the difference. I will probably find a difference, but my question is, did the environmental condition (e.g., proximity to an industrial source) cause this difference, or is this the kind of thing that happens a lot by chance? If I knew what chance can do, and how often it produces differences of particular sizes, I could look at the difference I observed, then look at what chance can do, and then I can make a decision! If my difference doesn’t happen a lot (we’ll get to how much not a lot is in a bit), then I might be willing to believe that the environmental condition caused a difference. If my difference happens all the time by chance alone, then I wouldn’t be inclined to think anything other than sampling error caused the difference.

So, here’s what we’ll do, even before running the study. We’ll do a simulation. We will sample numbers for group A and Group B, then compute the means for group A and group B, then we will find the difference in the means between group A and group B. But, we will do one very important thing. We will pretend that the two groups are actually the same — no real environmental difference between them. If we do this (do nothing, no manipulation that could cause a difference), then we know that only sampling error could cause any differences between the mean of group A and group B. We’ve eliminated all other causes, only chance is left. By doing this, we will be able to see exactly what chance can do. More importantly, we will see the kinds of differences that occur a lot, and the kinds that don’t occur a lot.

Before running the simulation, we need to define a threshold: how rare does a result need to be before we consider it unlikely to have occurred by chance? We will use 10,000 repetitions as our reference. An event that occurs 1 time out of 10,000 is considered extremely unlikely.

OK, now we have our number, we are going to simulate the possible mean differences between group A and group B that could arise by chance. We do this 10,000 times. This gives chance a lot of opportunity to show us what it does do, and what it does not do.

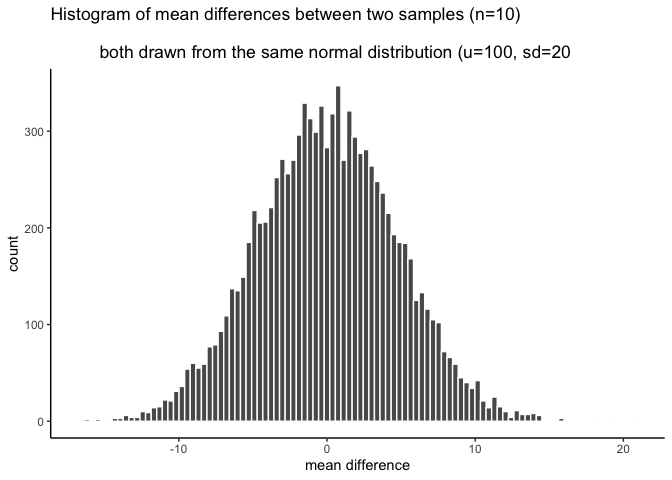

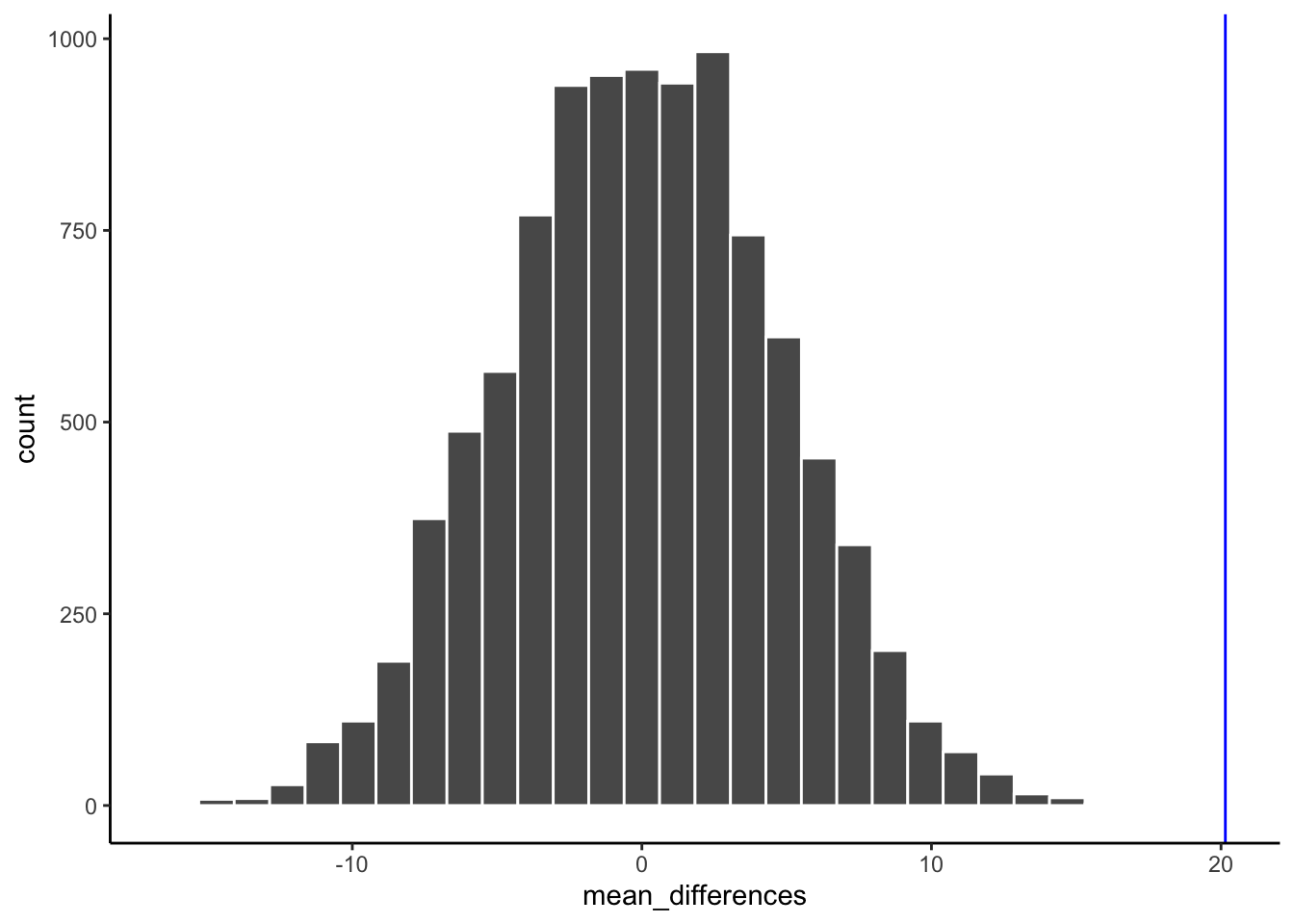

This is what I did: I sampled 20 numbers into group A (say, sites near an industrial source) and 20 into group B (background sites), but both groups are drawn from the same normal distribution — mean = 100 µg/m³, standard deviation = 15 µg/m³ — because we’re simulating the null scenario where there’s no real difference. Because the samples are coming from the same distribution, we expect that on average they will be similar (but we already know that samples differ from one another). Then, I compute the mean for each sample, and compute the difference between the means. I save the mean difference score, and end up with 10,000 of them. Then, I draw the histogram in Figure 5.16.
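The procedure just described can be sketched as follows. This is a minimal reconstruction from the description, not necessarily the exact code used for Figure 5.16; the seed and the plot labels are assumptions, so the individual values will differ from those reported in this chapter. The result is stored in a variable named `difference`, matching the name used in the text.

```r
set.seed(7)  # arbitrary seed, for reproducibility only
n_reps <- 10000

# Each repetition: draw 20 values per group from the SAME normal
# distribution (mean 100, sd 15) and save the difference in means
difference <- replicate(n_reps, {
  group_A <- rnorm(20, mean = 100, sd = 15)
  group_B <- rnorm(20, mean = 100, sd = 15)
  mean(group_A) - mean(group_B)
})

# Histogram of the 10,000 chance-only mean differences
hist(difference, breaks = 50,
     main = "Mean differences produced by chance",
     xlab = "Mean of group A minus mean of group B")
```

Because both groups are drawn with identical `mean` and `sd` arguments, nothing but sampling error can separate them, which is exactly the null scenario being simulated.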

Of course, we might recognize that chance could produce a difference greater than 25. We just didn’t give it the opportunity. We only ran the simulation 10,000 times. If we ran it a million times, maybe a difference greater than 25 would happen a couple of times. If we ran it a bazillion gazillion times, maybe a difference greater than 30 would happen a couple of times. If we go out to infinity, then chance might produce all sorts of bigger differences once in a while. But we’ve already decided that 1/10,000 is not a lot. So things that happen 0 out of 10,000 times, like differences greater than 25, are considered to be extremely unlikely.

Now we can see what chance can do to the size of our mean difference. The x-axis shows the size of the mean difference. We took our samples from the same distribution, so the difference between them should usually be close to 0, and that’s what we see in the histogram.

Pause for a second. Why should the mean differences usually be zero? Wasn’t the population mean 100, so shouldn’t they be around 100? No. The mean of group A will tend to be around 100, and the mean of group B will tend to be around 100. So, the difference score will tend to be 100-100 = 0. That is why we expect a mean difference of zero when the samples are drawn from the same population.

So, differences near zero happen the most. That’s good, that’s what we expect. Bigger or smaller differences happen increasingly less often. Differences beyond about 15 in either direction are so rare that their bars are barely visible, if at all. For our purposes, it looks like chance mostly produces differences between -15 and 15.

OK, let’s ask a couple simple questions. What was the biggest negative number that occurred in the simulation? We’ll use R for this. All of the 10,000 difference scores are stored in a variable I made called difference. If we want to find the minimum value, we use the min function. Here’s the result.

min(difference)

#> [1] -16.60894

OK, so what was the biggest positive number that occurred? Let’s use the max function to find out. It finds the biggest (maximum) value in the variable. FYI, we’ve just computed the range, the minimum and maximum numbers in the data. Remember we learned that before. Anyway, here’s the max.

max(difference)

#> [1] 20.6813

Both of these extreme values only occurred once. Those values were so rare we couldn’t even see them on the histogram, the bar was so small. Also, these biggest negative and positive numbers are broadly similar in size if you ignore their sign, which makes sense because the distribution looks roughly symmetrical.

So, what can we say about these two numbers for the min and max? We can say the min happens 1 time out of 10,000. We can say the max happens 1 time out of 10,000. Is that a lot of times? Not to me. It’s not a lot.

So, how often does a difference of 30 (much larger than the max) occur out of 10,000? We really can’t say; 30s didn’t occur in the simulation. Going with what we got, we say 0 out of 10,000. That’s never.
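Questions like "how often did this happen?" can be answered by counting over the simulated differences. The sketch below regenerates a `difference` vector under the same assumptions as the simulation (20 per group, mean 100, standard deviation 15) with an arbitrary seed, so its minimum and maximum will not match the values printed above.

```r
set.seed(8)  # arbitrary seed, so results differ from the values in the text

# Regenerate 10,000 chance-only mean differences
difference <- replicate(10000,
  mean(rnorm(20, mean = 100, sd = 15)) - mean(rnorm(20, mean = 100, sd = 15))
)

# Smallest and largest simulated differences
range(difference)

# How many times did the minimum and the maximum occur?
sum(difference == min(difference))
sum(difference == max(difference))

# How many simulated differences were 30 or more in either direction?
sum(abs(difference) >= 30)
```

Dividing any of these counts by 10,000 turns it into the "times out of 10,000" figure used in the discussion.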

We’re about to move into part three, which involves drawing decision lines and talking about them. The really important part about part 3 is this. What would you say if you ran this experiment once, and found a mean difference of 30? I would say it happens 0 times out of 10,000 by chance. I would say chance did not produce my difference of 30. That’s what I would say. We’re going to expand upon this right now.

5.5.4 Part 3: Judgment and Decision-making

We now use the simulated distribution to make a decision about whether the observed difference is consistent with what chance alone could produce. This decision-making structure — compare your result to the distribution of results that chance generates, and judge where your result falls — is the basis for every formal hypothesis test covered in this course.

The simulation gives us a concrete foundation for that judgment. We can see which differences chance produced often, which it produced rarely, and which it never produced at all.

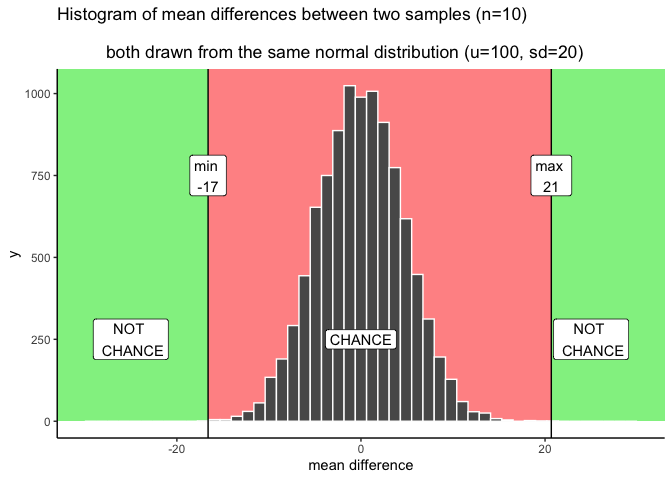

Let’s talk about some objective facts from our simulation of 10,000 replications, things that we definitely know to be true. For example, we can draw some lines on the graph, and label some different regions. We’ll talk about two kinds of regions.

- Region of chance. Chance did it. Chance could have done it.

- Region of not chance. Chance didn’t do it. Chance couldn’t have done it.

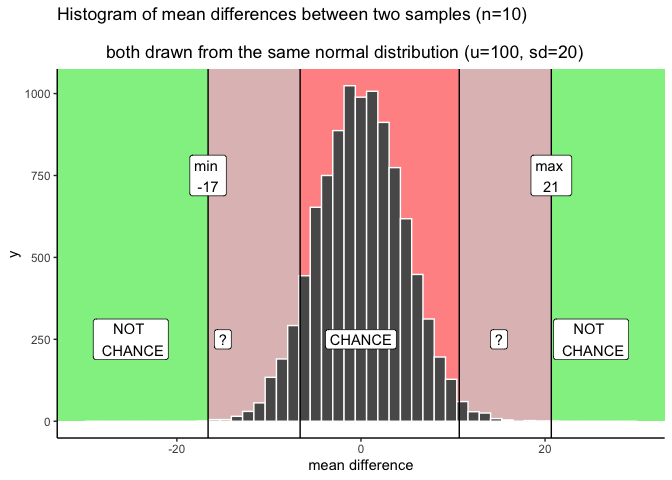

The regions are defined by the minimum value and the maximum value. Chance never produced a smaller or bigger number. The region inside the range is what chance did do, and the region outside the range on both sides is what chance never did. It looks like Figure 5.17:

We have just drawn some lines, and shaded some regions, and made one plan we could use to make decisions. How would the decisions work? Consider a mean difference between groups A and B of 25. Where is 25 in the figure? It’s in the green part. The green region is labeled NOT CHANCE: chance never produced a difference of 25 in 10,000 simulations. If we found a difference of 25, we could confidently conclude that chance did not cause it, and have grounds to consider whether our experimental manipulation produced the effect.

A difference of +10 falls within the red region — the window of what chance did produce in the simulation. Differences inside that window could plausibly have arisen from sampling error alone. A result of +10 does not provide strong grounds for concluding that the manipulation caused the difference.

This is the core logic of statistical inference: compare your observed result to the distribution of results chance could produce. If the observed result falls outside that distribution, chance is an unlikely explanation.

A difference of +10 falls within the chance window and could plausibly be a result of random sampling. A difference of +25 falls outside the window; chance produced that value 0 times out of 10,000. In practice, we use this comparison to decide whether an observed difference warrants further investigation.
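The two-region rule can be written as a small function. This is a sketch: `classify_difference` is a name invented here, and the `difference` vector is regenerated with an arbitrary seed under the same assumptions as the simulation (20 per group, mean 100, standard deviation 15), so its exact boundaries will differ from those in Figure 5.17.

```r
set.seed(9)  # arbitrary seed, for reproducibility only

# Regenerate 10,000 chance-only mean differences
difference <- replicate(10000,
  mean(rnorm(20, mean = 100, sd = 15)) - mean(rnorm(20, mean = 100, sd = 15))
)

# Label an observed difference by whether it falls inside the range
# of differences that chance produced in the simulation
classify_difference <- function(observed, simulated) {
  if (observed < min(simulated) || observed > max(simulated)) {
    "not chance"
  } else {
    "chance"
  }
}

classify_difference(10, difference)
classify_difference(25, difference)
```

The rule is deliberately blunt: it uses only the minimum and maximum, so a single extreme simulated value can shift the boundary.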

5.5.4.1 Grey areas

The clean division between “chance” and “not chance” breaks down near the edges of the distribution. We would prefer a simple yes/no answer about whether chance caused our difference, but in practice we have to acknowledge that some differences fall in ambiguous territory. Figure 5.18 illustrates where those grey areas are.

I made two grey areas, and they are reddish grey, because we are still in the chance window. There are question marks (?) in the grey areas. Why? The question marks reflect some uncertainty that we have about those particular differences. For example, suppose you found a difference that was in a grey area, say 15. That is less than the maximum, which means chance did create differences of around 15. But differences of 15 don’t happen very often.

What can you conclude or say about this 15 you found? Can you say without a doubt that chance did not produce the difference? Of course not, you know that chance could have. Still, it’s one of those things that doesn’t happen a lot. That makes chance an unlikely explanation. Instead of thinking that chance did it, you might be willing to take a risk and say that your experimental manipulation caused the difference. You’d be making a bet that it wasn’t chance, but it could be a safe bet, since you know the odds are in your favor.

The boundaries of the grey area reflect the researcher’s tolerance for uncertainty. More conservative thresholds reduce false positives; more permissive ones reduce false negatives. The formal hypothesis testing framework (covered in Chapter 6) provides a standardized approach to this trade-off.
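To put a rough number on “doesn’t happen very often”, we can count how many simulated differences are at least as big as the one we found. The sketch below assumes the same made-up population as before (normal, mean 100, standard deviation 15, 20 per group); the function name `tail_share` and the 95% and 99% tolerances are illustrative choices, not thresholds taken from the figure.

```r
set.seed(2)

# Assumed null simulation: two groups of 20 from the same normal population
n_per_group <- 20
chance_diffs <- replicate(10000, {
  mean(rnorm(n_per_group, 100, 15)) - mean(rnorm(n_per_group, 100, 15))
})

# Share of chance differences at least as extreme as the observed one
# (in either direction)
tail_share <- function(observed, diffs) {
  mean(abs(diffs) >= abs(observed))
}

print(tail_share(15, chance_diffs))

# Two possible inner edges for the grey areas:
# the central 95% and the central 99% of what chance produced
print(quantile(chance_diffs, probs = c(0.025, 0.975)))
print(quantile(chance_diffs, probs = c(0.005, 0.995)))
```

Moving from the 95% edges to the 99% edges is the more conservative choice: fewer differences get treated as “probably not chance”.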

Another thing to think about is your decision policy. What will you do when your observed difference is in your grey area? Will you always make the same decision about the role of chance? Or will you sometimes flip-flop depending on how you feel? Perhaps you think that there shouldn’t be a strict policy, and that you should accept some level of uncertainty. The difference you found could be a real one, or it might not. There’s uncertainty, and it’s hard to avoid.

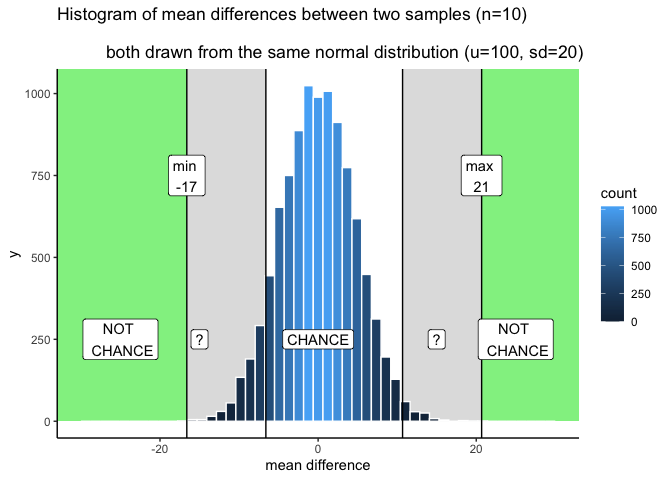

So let’s illustrate one more kind of strategy for making decisions. We just talked about one that had some lines, and some regions. This makes it seem like a binary choice: we can either rule out, or not rule out the role of chance. Another perspective is that everything is a different shade of grey, like in Figure 5.19.

OK, so I made it shades of blue (because it was easier in R). Now we can see two decision plans at the same time. Notice that as the bars get shorter, they also become a darker, stronger blue. The color can be used as a guide for your confidence. That is, your confidence in the belief that your manipulation caused the difference rather than chance. If you found a difference near a really dark bar, those don’t happen often by chance, so you might be really confident that chance didn’t do it. If you find a difference near a slightly lighter blue bar, you might be slightly less confident. That is all. You run your experiment, you get your data, then you have some amount of confidence that it wasn’t produced by chance. This way of thinking is elaborated to very interesting degrees in the Bayesian world of statistics. We don’t wade too much into that, but mention it a little bit here and there. It’s worth knowing it’s out there.

5.5.4.2 Making decisions and being wrong

No matter how you plan to make decisions about your data, you will always be prone to making some mistakes. You might call one finding real, when in fact it was caused by chance. This is called a type I error, or a false positive. You might ignore one finding, calling it chance, when in fact it wasn’t chance (even though it was in the window). This is called a type II error, or a false negative.

How you make decisions can influence how often you make errors over time. If you are a researcher, you will run lots of experiments, and you will make some mistakes along the way. If you follow the very strict method of only accepting results as real when they are in the “no chance” zone, then you won’t make many type I errors. Pretty much all of the results you accept will be real. But you’ll also make type II errors, because you will miss real things that your decision criteria attribute to chance. The opposite also holds. If you are willing to be more liberal, and accept results in the grey areas as real, then you will make more type I errors, but you won’t make as many type II errors. Under the decision strategy of using these cutoff regions for decision-making there is a necessary trade-off. The Bayesian view gets around this a little bit. Bayesians talk about updating their beliefs and confidence over time. In that view, all you ever have is some level of confidence about whether something is real, and by running more experiments you can increase or decrease your level of confidence. This, in some fashion, avoids some of the trade-off between type I and type II errors.
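The trade-off can be made concrete with a small simulation. This is only a sketch under assumptions we made up: a normal population (mean 100, standard deviation 15), 20 per group, a true effect of 10, and two hypothetical policies (a strict cutoff at the outer edge of the chance window and a liberal cutoff at the 95th percentile of chance differences).

```r
set.seed(3)

n_per_group <- 20
pop_sd <- 15        # assumed population standard deviation
true_effect <- 10   # assumed size of the real effect

# One simulated experiment: returns the mean difference between groups
sim_diff <- function(effect) {
  group_a <- rnorm(n_per_group, mean = 100 + effect, sd = pop_sd)
  group_b <- rnorm(n_per_group, mean = 100, sd = pop_sd)
  mean(group_a) - mean(group_b)
}

null_diffs <- replicate(5000, sim_diff(0))            # nothing is going on
real_diffs <- replicate(5000, sim_diff(true_effect))  # the effect is real

# A result is called "real" when its absolute size exceeds the cutoff
error_rates <- function(cutoff) {
  c(cutoff  = cutoff,
    type_I  = mean(abs(null_diffs) > cutoff),    # chance result called real
    type_II = mean(abs(real_diffs) <= cutoff))   # real effect called chance
}

strict_cutoff  <- max(abs(null_diffs))
liberal_cutoff <- unname(quantile(abs(null_diffs), 0.95))

policies <- rbind(strict  = error_rates(strict_cutoff),
                  liberal = error_rates(liberal_cutoff))
print(policies)
```

Comparing the two rows shows the pattern described above: the strict policy buys a low type I rate at the price of a high type II rate, and the liberal policy does the reverse.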

Regardless, there is another way to reduce type I and type II errors, and to increase your confidence in your results, even before you do the experiment. It’s called “knowing how to design a good experiment”.

5.5.5 Part 4: Experiment Design

Study design directly controls the sensitivity of the analysis. It is often possible — and important — to choose a design that is sensitive to the size of effect you care about detecting.

Two forces govern whether a study can detect a real effect: the size of the effect and the amount of noise in the data. The design choices you make — primarily sample size — determine how much noise obscures the signal.

Recall what happens to the sampling distribution of the mean as sample size increases: the distribution narrows. The same is true for the distribution of mean differences. As N increases, the range of differences that chance produces shrinks. At very large N, chance produces only very small differences. This means that with a large enough sample, even small real effects can be reliably distinguished from noise.

For example, we could run our experiment with 20 subjects in each group. Or, we could decide to invest more time and run 40 subjects in each group, or 80, or 150. When you are the experimenter, you get to decide the design. These decisions matter big time. Basically, the more subjects you have, the more sensitive your experiment. With bigger N, you will be able to reliably detect smaller mean differences, and be able to confidently conclude that chance did not produce those small effects.

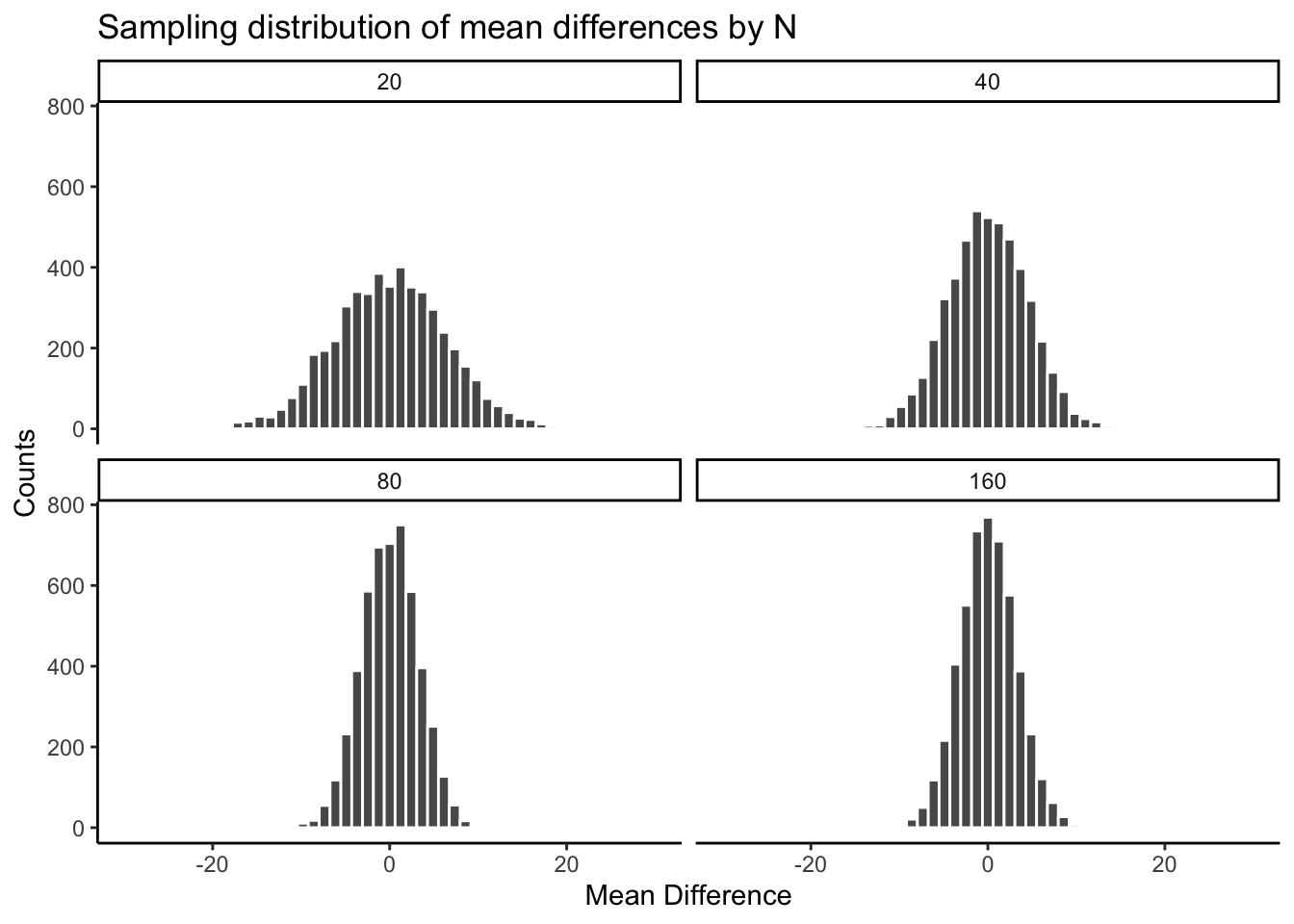

Check out the histograms in Figure 5.20. This is the same simulation as before, but with four different sample-sizes: 20, 40, 80, 160. We are doubling our sample-size across each simulation just to see what happens to the width of the chance window.

There you have it. The sampling distribution of the mean differences shrinks toward 0 as sample-size increases. This means if you run an experiment with a larger sample-size, you will be able to detect smaller mean differences, and be confident they aren’t due to chance. Table 5.2 contains the minimum and maximum values that chance produced across the four sample-sizes:

| sample_size | smallest | largest |
|---|---|---|
| 20 | -24.19486 | 21.89654 |
| 40 | -16.18592 | 15.69097 |
| 80 | -10.11355 | 10.85447 |
| 160 | -11.25008 | 11.40671 |

The table shows the range of chance behavior is wider for smaller N and narrower for larger N. Consider what this narrowing means for your study design. For example, one aspect of the design is the choice of sample size, N. In an environmental study, this might be the number of monitoring sites, field plots, or sampling events.
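If you want to build a table like this yourself, the sketch below runs a null simulation at each of the four sample sizes and records the smallest and largest difference. The population (normal, mean 100, standard deviation 15) and the function name `chance_range` are assumptions for illustration, so the numbers will not match Table 5.2.

```r
set.seed(4)

# Smallest and largest mean difference produced by chance for one sample size.
# Assumption: both groups come from the same normal population (mean 100, sd 15).
chance_range <- function(n_per_group, n_sims = 10000) {
  diffs <- replicate(n_sims, {
    mean(rnorm(n_per_group, 100, 15)) - mean(rnorm(n_per_group, 100, 15))
  })
  c(sample_size = n_per_group, smallest = min(diffs), largest = max(diffs))
}

# One row per sample size
ranges <- t(sapply(c(20, 40, 80, 160), chance_range))
print(ranges)
```

Because the minimum and maximum are the most extreme of 10,000 draws, they bounce around from run to run more than the bulk of the distribution does. Changing the seed gives a feel for how much.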

If it turns out your manipulation will cause a difference of +11, then what should you do? Run an experiment with N = 20 people? I hope not. If you did that, you could get a mean difference of +11 fairly often by chance. However, if you ran the experiment with 160 people, then you would definitely be able to say that +11 was not due to chance, it would be outside the range of what chance can do. You could even consider running the experiment with 80 subjects. A +11 there wouldn’t happen often by chance, and you’d be cost-effective, spending less time on the experiment.

The point is: the design of the experiment determines the sizes of the effects it can detect. If you want to detect a small effect, make your sample size bigger. It’s really important to say this is not the only thing you can do. You can also make your cell-sizes bigger. For example, we often take several measurements from a single subject. The more measurements you take (cell-size), the more stable your estimate of the subject’s mean. We discuss these issues more later. You can also make a stronger manipulation, when possible.

5.5.6 Part 5: I have the power

Statistical power is the probability that a study will detect a real effect when one exists. A well-powered study is designed so that the sampling distribution of the test statistic — under the assumption that the effect is real — falls reliably outside the null distribution. The key driver of power is sample size: larger samples narrow the null distribution, making real effects easier to distinguish from chance.

We have already seen this principle in action. The number of observations in the study changes the sensitivity of the design: more observations means the distribution of chance differences shrinks, which makes it possible to detect smaller real effects.
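Power can also be estimated by simulation. The sketch below is one way to do it under assumptions of our own: a normal population (mean 100, standard deviation 15), a real effect of 10, and a decision rule that calls a result real when it is larger than 95% of chance differences. The function `estimate_power` is a hypothetical helper, not something defined elsewhere in this book.

```r
set.seed(5)

# Estimated power: the share of experiments with a real effect whose
# mean difference lands beyond the (assumed) 95% edge of the chance window.
estimate_power <- function(n_per_group, effect, pop_sd = 15, n_sims = 2000) {
  sim_diff <- function(shift) {
    mean(rnorm(n_per_group, 100 + shift, pop_sd)) -
      mean(rnorm(n_per_group, 100, pop_sd))
  }
  null_diffs <- replicate(n_sims, sim_diff(0))
  cutoff <- unname(quantile(abs(null_diffs), 0.95))
  effect_diffs <- replicate(n_sims, sim_diff(effect))
  mean(abs(effect_diffs) > cutoff)
}

sample_sizes <- c(20, 40, 80, 160)
power_by_n <- sapply(sample_sizes, estimate_power, effect = 10)
names(power_by_n) <- sample_sizes
print(power_by_n)
```

Each entry is the proportion of simulated experiments that would have detected the effect at that sample size, which is exactly what power means.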

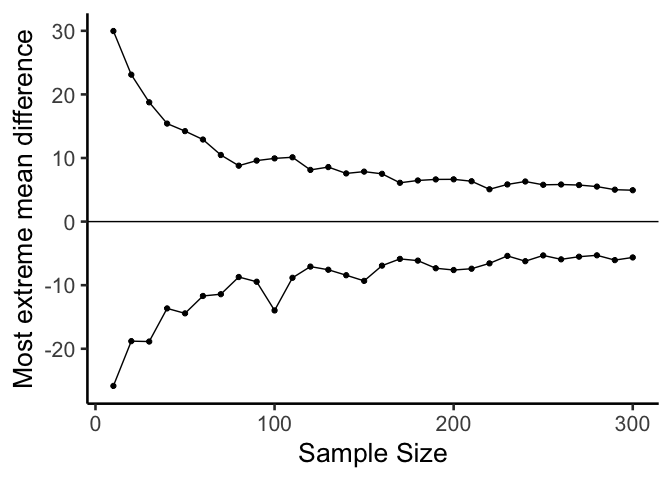

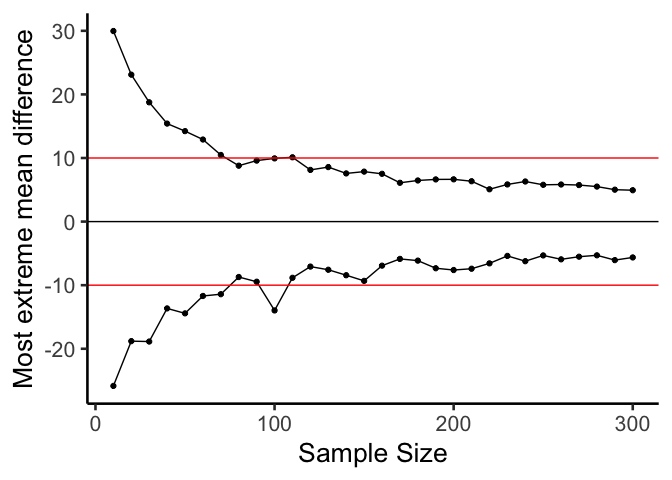

Let’s take a look at one more plot. What we will do is simulate a measure of sensitivity across a whole bunch of sample sizes, from 10 to 300. We’ll do this in steps of 10. For each simulation, we’ll compute the mean differences as we have done. But, rather than showing the histogram, we’ll just compute the smallest value and the largest value. This is a pretty good measure of the outer reach of chance. Then we’ll plot those values as a function of sample size and see what we’ve got.

Figure 5.21 shows a reasonably precise window of sensitivity as a function of sample size. For each sample size, we can see the maximum difference that chance produced and the minimum difference. In those simulations, chance never produced bigger or smaller differences. So, each design is sensitive to any difference that is underneath the bottom line, or above the top line.

Here’s another way of putting it. Which of the sample sizes will be sensitive to a difference of +10 or -10? That is, if a difference of +10 or -10 was observed, for which designs could we very confidently say that the difference was not due to chance, because according to these simulations chance never produced differences that big? To help us see which ones are sensitive, Figure 5.22 draws horizontal lines at -10 and +10.

Based on visual guesstimation, the designs with sample-size >= 100 are all sensitive to real differences of 10. In these simulations, none of those designs produced differences beyond the red lines by chance alone. If these designs were used, and if an effect of 10 or larger was observed, then we could be confident that chance alone did not produce the effect. Designing your experiment so that you know it is sensitive to the thing you are looking for is the big idea behind power.
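The same sweep can be sketched in a few lines of R. As before, the population (normal, mean 100, standard deviation 15) and the 1,000 simulations per sample size are assumptions chosen to keep the example quick, so the sample size at which a design becomes sensitive to ±10 will not necessarily match the figures.

```r
set.seed(6)

sample_sizes <- seq(10, 300, by = 10)

# For each sample size, record the outer reach of chance
reach <- t(sapply(sample_sizes, function(n) {
  diffs <- replicate(1000, {
    mean(rnorm(n, 100, 15)) - mean(rnorm(n, 100, 15))
  })
  c(n = n, smallest = min(diffs), largest = max(diffs))
}))

# Designs where chance never reached -10 or +10
sensitive_to_10 <- reach[reach[, "smallest"] > -10 & reach[, "largest"] < 10, "n"]

print(head(reach))
print(sensitive_to_10)
```

Plotting the `smallest` and `largest` columns against `n` gives the two curves of the sensitivity window, and `sensitive_to_10` lists the designs that sit entirely between the lines at -10 and +10.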

5.5.7 Summary of Simulation-Based Inference

What did we learn from this simulation-based approach? We learned the basics of what we’ll be doing moving forward — and we did it all without any hard math or formulas. We sampled numbers, computed means, subtracted means between conditions, then repeated that process many times and counted up the mean differences and put them in a histogram. This showed us what chance can do in a study. Then, we discussed how to make decisions around these facts. And, we showed how we can control the role of chance just by changing things like sample size.

5.6 The randomization test (permutation test)

Welcome to the first official inferential statistic in this textbook. Up till now we have been building some intuitions for you. Next, we will get slightly more formal and show you how we can use random chance to tell us whether our experimental finding was likely due to chance or not. We do this with something called a randomization test. The ideas behind the randomization test are the very same ideas behind the rest of the inferential statistics that we will talk about in later chapters. And, surprise, we have already talked about all of the major ideas. Now, we will just put the ideas together, and give them the name randomization test.

Here’s the big idea. When you run an experiment and collect some data you get to find out what happened that one time. But, because you ran the experiment only once, you don’t get to find out what could have happened. The randomization test is a way of finding out what could have happened. And, once you know that, you can compare what did happen in your experiment, with what could have happened.

5.6.1 Pretend example: does distance from a highway affect NO₂ levels?

Let’s say you are monitoring nitrogen dioxide (NO₂) concentrations at air quality sites near a major highway. You assign 20 monitoring sites to a “near” group (within 50 m of the highway) and 20 different sites to a “far” group (500 m or more from the highway). If highway traffic is a meaningful source of NO₂, then the near group should have higher concentrations on average than the far group.

Let’s say the data looked like this:

| site | near_ppb | far_ppb |
|---|---|---|
| 1 | 55 | 33 |
| 2 | 53 | 27 |
| 3 | 49 | 12 |
| 4 | 58 | 17 |
| 5 | 63 | 30 |
| 6 | 60 | 33 |
| 7 | 54 | 18 |
| 8 | 55 | 32 |
| 9 | 63 | 13 |
| 10 | 59 | 16 |
| 11 | 46 | 19 |
| 12 | 57 | 26 |
| 13 | 59 | 13 |
| 14 | 38 | 31 |
| 15 | 38 | 21 |
| 16 | 40 | 33 |
| 17 | 62 | 26 |
| 18 | 39 | 29 |
| 19 | 46 | 13 |
| 20 | 38 | 31 |
| Sums | 1032 | 473 |
| Means | 51.6 | 23.65 |

So, did the near-highway sites have higher NO₂ than the far sites? Look at the mean concentrations at the bottom of the table. The mean for near sites was 51.6 ppb, and the mean for far sites was 23.65 ppb. Just looking at the means, it looks like proximity to the highway matters!

“But wait — could this just be chance?” Even if this were real data, you might wonder: maybe the near sites just happened to be in a windier or more industrialized area, so their higher readings reflect those conditions rather than the highway itself. Or maybe the difference between the groups happened simply because of random sampling variation. We agree this is a legitimate concern. Let’s take a closer look. We already know how the data came out. What we want to know is how they could have come out — what are all the possibilities?

For example, the data would have come out a bit different if some of the sites from the near group had been placed in the far group, and vice versa. Think of all the ways you could have assigned the 40 sites to two groups — there are lots of ways. And, the means for each group would turn out differently depending on how the sites are assigned.

Practically speaking, it’s not possible to run the experiment every possible way, that would take too long. But, we can nevertheless estimate how all of those experiments might have turned out using simulation.
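Just how many ways are there? Counting the ways to pick which 20 of the 40 sites go in the near group is a standard combinations calculation, and R’s built-in `choose` function does it for us. The variable names are ours.

```r
n_sites <- 40   # total monitoring sites
n_near  <- 20   # sites assigned to the "near" group

# Number of distinct ways to choose which sites form the near group
n_assignments <- choose(n_sites, n_near)
print(format(n_assignments, big.mark = ",", scientific = FALSE))
```

That is roughly 138 billion possible assignments, which is why we sample a manageable number of random assignments instead of listing them all.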

We take all 40 NO₂ readings from both groups and pool them together. Then we randomly assign 20 to a ‘near’ group and 20 to a ‘far’ group — as if the original assignment had been done differently. We compute the new group means and their difference. We repeat this process many times to build up a picture of what the difference could have been under random assignment.

5.6.1.1 Doing the randomization

Before we do that, let’s show how the randomization part works. We’ll use fewer numbers to make the process easier to look at. Here are the first 5 NO₂ readings from each group.

| site | near_ppb | far_ppb |
|---|---|---|
| 1 | 55 | 33 |
| 2 | 53 | 27 |
| 3 | 49 | 12 |
| 4 | 58 | 17 |
| 5 | 63 | 30 |
| Sums | 278 | 119 |
| Means | 55.6 | 23.8 |

Things could have turned out differently if some of the near-highway sites had been placed in the far group instead, and vice versa. Here’s how we can do some random switching using R.

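```r
# NO2 readings (ppb) for the 20 near and 20 far sites, from the table above
near <- c(55, 53, 49, 58, 63, 60, 54, 55, 63, 59,
          46, 57, 59, 38, 38, 40, 62, 39, 46, 38)
far  <- c(33, 27, 12, 17, 30, 33, 18, 32, 13, 16,
          19, 26, 13, 31, 21, 33, 26, 29, 13, 31)

# Pool the first five readings from each group, then shuffle them
all_readings <- c(near[1:5], far[1:5])
randomize_scores <- sample(all_readings)

# Split the shuffled readings back into two groups of five
new_near <- randomize_scores[1:5]
new_far <- randomize_scores[6:10]

print(new_near)
#> [1] 58 55 33 49 12
print(new_far)
#> [1] 63 53 27 30 17
```

We have taken the first 5 readings from each group and put them all into a variable called all_readings. Then we use the sample function in R to shuffle the readings. Finally, we take the first 5 readings from the shuffled numbers and put them into new_near, and the last five into new_far. Because the shuffle is random, your output will differ from run to run.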

If we do this a couple of times and put them in a table, we can indeed see that the means for each group would be different if the sites were shuffled around. Check it out:

| site | near_ppb | far_ppb | near2 | far2 | near3 | far3 |
|---|---|---|---|---|---|---|
| 1 | 55 | 33 | 33 | 27 | 27 | 17 |
| 2 | 53 | 27 | 53 | 55 | 33 | 58 |
| 3 | 49 | 12 | 17 | 12 | 49 | 53 |
| 4 | 58 | 17 | 58 | 63 | 30 | 63 |
| 5 | 63 | 30 | 49 | 30 | 12 | 55 |
| Sums | 278 | 119 | 210 | 187 | 151 | 246 |
| Means | 55.6 | 23.8 | 42 | 37.4 | 30.2 | 49.2 |

5.6.1.2 Simulating the mean differences across the different randomizations

In our pretend study we found that the mean NO₂ for near-highway sites was 51.6 ppb, and for far sites was 23.65 ppb. The mean difference (near − far) was 27.95 ppb. This is a pretty big difference. This is what did happen. But, what could have happened? If we tried out all the possible ways to assign 40 sites to two groups, what does the distribution of the possible mean differences look like? Let’s find out. This is what the randomization test is all about.

When we do our randomization test we will measure the mean difference in NO₂ between the near and far groups. Every time we randomize we will save the mean difference.

Let’s look at a short animation of what is happening in the randomization test. Figure 5.23 shows data from a different fake study, but the principles are the same. We’ll return to the NO₂ example after the animation. The animation is showing three important things. First, the purple dots show the mean values in two groups (lower-exposure vs. higher-exposure sites). It looks like there is a difference, as one dot is lower than the other. We want to know if chance could produce a difference this big. Second, at the beginning of the animation, the light green and red dots show the individual readings from each of 10 sites in each group (the purple dots are the means of these original readings). During the randomizations, we randomly shuffle the original readings between the groups. You can see this happening throughout the animation, as the green and red dots appear in different random combinations. Third, the moving yellow dots show you the new means for each group after the randomization. The differences between the yellow dots show you the range of differences that chance could produce.

We are engaging in some visual statistical inference. By looking at the range of motion of the yellow dots, we are watching what kind of differences chance can produce. In this animation, the purple dots, representing the original difference, are generally outside of the range of chance. The yellow dots don’t move past the purple dots. As a result, chance is an unlikely explanation of the difference.

If the purple dots were inside the range of the yellow dots, then we would know that chance is capable of producing the difference we observed, and that it does so fairly often. As a result, we should not conclude the manipulation caused the difference, because it could have easily occurred by chance.

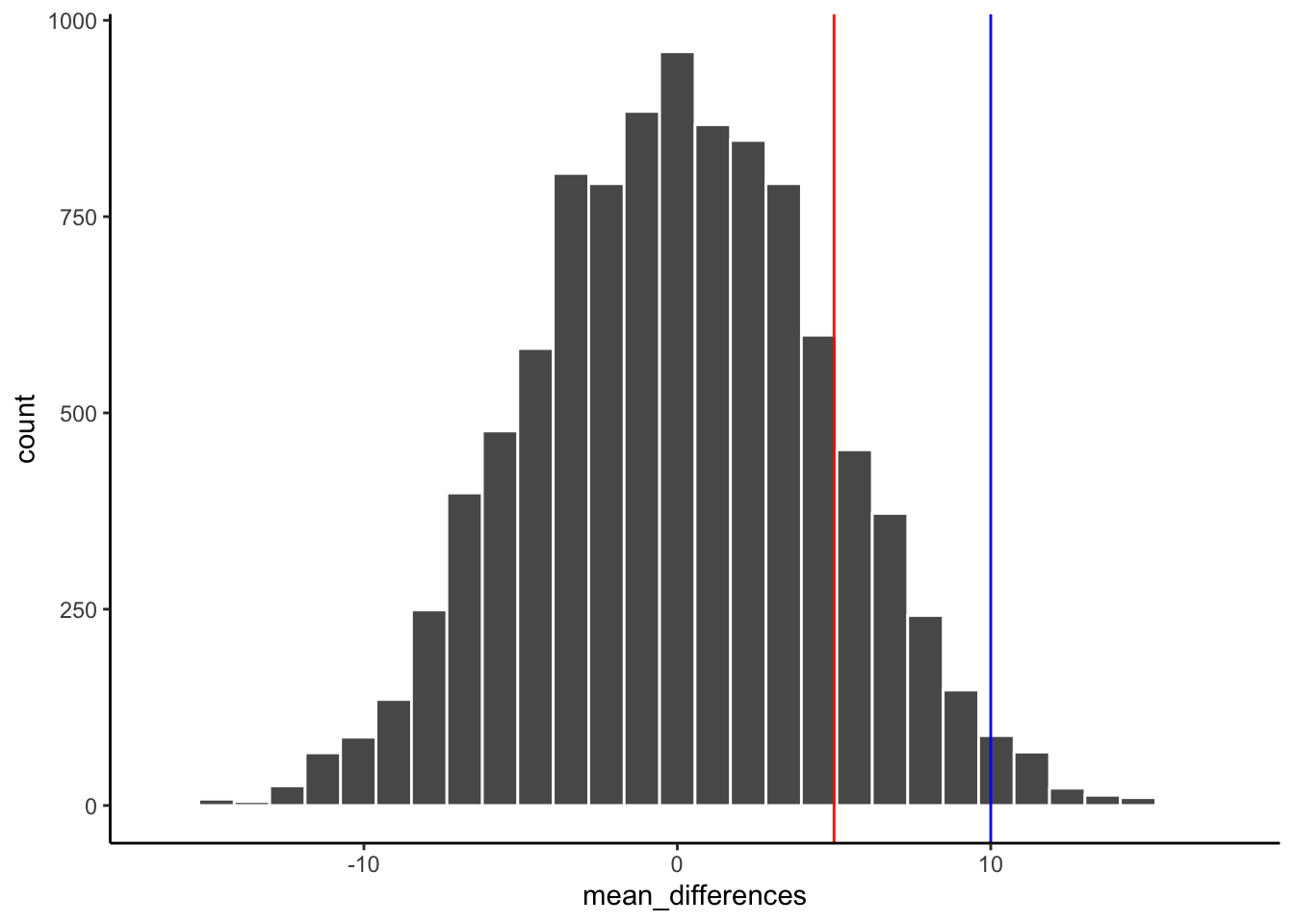

Let’s return to the NO₂ example. After we randomize our readings many times and compute the new means and mean differences, we will have loads of mean differences to look at, which we can plot in a histogram. The histogram gives a picture of what could have happened. Then, we can compare what did happen with what could have happened.

Here’s the histogram of the mean differences from the randomization test. For this simulation, we randomized the results from the original study 10,000 times. This is what could have happened. The blue line in Figure 5.24 shows where the observed difference lies on the x-axis.

What do you think? Could the difference represented by the blue line have been caused by chance? My answer is probably not. The histogram shows us the window of chance — the range of differences that random assignment alone can produce. The blue line is not inside that window. This means we can be pretty confident that the difference we observed was not due to chance alone, and is more consistent with a real environmental effect of highway proximity on NO₂.

We are looking at another window of chance. We are seeing a histogram of the kinds of mean differences that could have occurred in our study if we had randomly assigned our 40 sites to the near and far groups differently. As you can see, the mean differences range from negative to positive. The most frequent difference is 0. The distribution appears to be symmetrical about zero. Notice that as the differences get larger (in either direction), they become less frequent. The blue line shows us the observed difference from our study. Where is it? It’s way out to the right — well outside the histogram. In other words, when we look at what could have happened by chance, we see that what did happen doesn’t occur very often by chance alone.
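For readers who want to run the randomization test themselves, here is a minimal sketch using the 40 readings from the table. The seed and variable names are ours, and the shuffles you get will differ from the ones behind the figure, but the logic is the one described above: pool, shuffle, split, and save the mean difference.

```r
set.seed(7)  # arbitrary seed, only so the sketch is reproducible

# NO2 readings (ppb) from the table in this section
near <- c(55, 53, 49, 58, 63, 60, 54, 55, 63, 59,
          46, 57, 59, 38, 38, 40, 62, 39, 46, 38)
far  <- c(33, 27, 12, 17, 30, 33, 18, 32, 13, 16,
          19, 26, 13, 31, 21, 33, 26, 29, 13, 31)

# What did happen
observed_diff <- mean(near) - mean(far)

# What could have happened: shuffle the pooled readings 10,000 times
all_readings <- c(near, far)
perm_diffs <- replicate(10000, {
  shuffled <- sample(all_readings)
  mean(shuffled[1:20]) - mean(shuffled[21:40])
})

print(observed_diff)
print(range(perm_diffs))                # the window of chance
print(observed_diff > max(perm_diffs))  # is the observed difference outside it?
```

Calling `hist(perm_diffs)` on the result draws the window of chance, and `abline(v = observed_diff)` would mark where the observed difference falls.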

Important: When we speak of what could have happened, we are talking about what could have happened by chance. When we compare what did happen to what chance could have done, we can assess whether our result is likely due to chance or something real.

OK, let’s pretend we got a much smaller mean difference when we first ran the study. We can draw new lines (blue and red) to represent smaller differences we might have found.

Look at the blue line in Figure 5.25. If you found a mean difference of 10 ppb, would you be convinced that this wasn’t caused by chance? As you can see, the blue line is inside the chance window — differences of +10 ppb do happen sometimes by chance. You might infer that the difference was probably not due to chance (it’s on the edge), but you’d be a little skeptical. How about the red line? The red line represents a difference of +5 ppb. If you found a difference of +5 here, would you be confident that it wasn’t caused by chance? No — the red line is well inside the chance window, and this kind of difference happens fairly often. You’d need more evidence before concluding that highway proximity was driving the difference.

5.6.2 Take homes so far

The simulation-based approach developed in this chapter illustrates the core logic of all the formal tests covered later in this course.

Inferential statistics is an attempt to solve the problem: where did my data come from? In the randomization test example, our question was: where did the differences between the means in my data come from? We know that the differences could be produced by chance alone. We simulated what chance can do using randomization. Then we plotted what chance can do using a histogram. Then, we used that picture to help us make an inference. Did our observed difference come from the chance distribution, or not? When the observed difference is clearly inside the chance distribution, then we can infer that our difference could have been produced by chance. When the observed difference is not clearly inside the chance distribution, then we can infer that our difference was probably not produced by chance.

These pictures are very, very helpful. If one of our goals is to summarize a bunch of complicated numbers and arrive at an inference, the pictures do a great job. We don’t even need a summary number — we just need to look at the picture and see if the observed difference is inside or outside of the chance window. As we move forward, the main thing we will do is formalize this process and talk more about “standard” inferential statistics. For example, rather than looking at a picture, we will create summary numbers. What if you wanted the probability that your difference could have been produced by chance? That could be a single number. If there was a 95% probability that chance could produce the difference you observed, you might not be very confident that your environmental condition was causing the difference. If there was only 1% probability that chance could produce your difference, you might be much more confident that chance did not produce it — and that your variable of interest (highway proximity, pollution source, land use type) actually matters. In order to get there, we will introduce some more foundational tools for statistical inference.
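As a preview of that single number, one simple version is the proportion of shuffles that produced a difference at least as large as the one observed. The sketch below reuses the NO₂ readings; the function name `chance_share` and the choice to count differences in either direction are our assumptions, and later chapters formalize this idea.

```r
set.seed(8)

# NO2 readings (ppb) from the table in this section
near <- c(55, 53, 49, 58, 63, 60, 54, 55, 63, 59,
          46, 57, 59, 38, 38, 40, 62, 39, 46, 38)
far  <- c(33, 27, 12, 17, 30, 33, 18, 32, 13, 16,
          19, 26, 13, 31, 21, 33, 26, 29, 13, 31)

# Distribution of mean differences under random reassignment
all_readings <- c(near, far)
perm_diffs <- replicate(10000, {
  shuffled <- sample(all_readings)
  mean(shuffled[1:20]) - mean(shuffled[21:40])
})

# Proportion of shuffles with a difference at least as large as `observed`
# (counting both directions)
chance_share <- function(observed) {
  mean(abs(perm_diffs) >= abs(observed))
}

print(sapply(c(observed = 27.95, edge = 10, small = 5), chance_share))
```

A proportion near zero says chance rarely or never produced a difference that large. A large proportion says chance produces it all the time.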